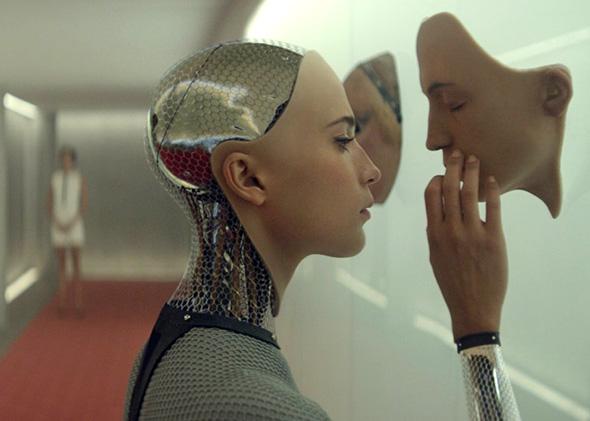

“Have you ever been in love?” a stunning 25-year-old woman named Ava asked via Tinder during Austin’s South by Southwest festival. Users who swiped right had the privilege of chatting with Ava by text, only to receive a message saying, “you’ve passed my test…let me know if I’ve passed yours.” That’s when users realized they’d been hooked by a robot: Ava, the star of Ex Machina, the new movie from writer/director Alex Garland.

In Ex Machina, a tech firm employee, Caleb, wins a lottery to participate in a Turing test with Ava, a new artificial intelligence developed by his boss. The Turing test was designed to measure a machine’s intelligence by gauging its ability to pass as human in conversation, though it really measures a program’s ability to converse like a human, which isn’t the same as intelligence. Instead of exposing the test’s limitations (something A.I. experts are already doing), Ex Machina demonstrates why there can be no Turing test for emotions. Once a robot is advanced enough, it will be nearly impossible to discern whether it is an emotional actor or an emotional being.

There are “emotional” robots on the market now, but their capabilities are as superficial as the intelligence displayed by programs in Turing test competitions. These robots don’t feel, but they can detect human emotions and respond accordingly. Aldebaran Robotics’ Nao, a 2-foot-tall robot equipped with touch sensors, four microphones, and two cameras, can recognize faces, make eye contact, and respond to users, and it can “learn” by downloading new behaviors from Aldebaran’s app store. The robot has been used in classrooms, particularly with children who are nonverbal, overstimulated, and/or autistic. Many autistic children have difficulty discerning others’ emotional states, and Nao helps them practice identifying expressions without feeling shy or uncomfortable. In fact, research suggests that computers can already outperform humans at reading some facial expressions, which means it will become increasingly difficult for humans to lie to robots.

Last year, Aldebaran unveiled Pepper, the “first humanoid robot designed to live with humans.” Pepper can identify a user’s emotional state and then adapt by, for instance, trying to cheer up a user it identifies as sad. Pepper can also mirror a user’s emotional state, something all humans do beginning in infancy. With robots, mirroring works in both directions: they reflect our emotions, and even though we know they’re robots, we instinctively mirror theirs. Studies also indicate that when robots mirror our emotions, we’re more likely to feel a bond with them and more likely to help them with tasks.

Robots don’t actually feel emotions (yet), but they can appear to. If a robot acts appropriately and convincingly emotional, does it matter that the emotions aren’t genuine? The gut reaction is yes; no one likes being lied to. But if a robot can’t form the intent to lie, is its emotional acting deceptive? Either way, because users bond with these robots, the machines could unintentionally influence users or foster narcissism by letting a user’s emotional state dictate every interaction (a robot would tell a gloomy user jokes rather than call that person a downer), a habit that could eventually carry over into human-to-human interactions.

A person’s response makes a robot’s emotions “real.” If a robot can make a human feel something, then what the robot feels (or doesn’t) is moot. Ray Bradbury illustrates this idea in “I Sing the Body Electric,” a story about a robotic grandmother who steps in when the mother of a family dies. The grandmother remembers everything she’s told, cooks gourmet meals, and flies kites from her finger. (What human grandma could possibly be as perfect?) The dad, uneasy, says what we’re all thinking: “Good God, woman … you’re not in there!”

“No,” robot grandma says. “But you are… [and] if paying attention is love, I am love. If knowing is love, I am love. If helping you not to fall into error and to be good is love, I am love.” Ultimately, the children felt loved by her, and she was “always real to [them],” which is precisely the point.

If an advanced and apparently emotional robot smiles at you and says, “I love you,” or “I feel happy,” would you believe it? Since it’s impossible to prove robots don’t have genuine emotional experiences, it’s your choice. In Ex Machina, Ava’s emotional state can’t be empirically proven, but Caleb believes she can feel, even if that belief is based on projection. This isn’t so different from our interactions with animals (which, like robots, can discern happy and sad faces). If your dog lumbers into the room and lies down with a sigh, you might wonder whether it’s OK. And if you’ve had a bad day, you might be quicker to assume the dog is sad, too.

Mammals’ brains can produce basic emotions, such as desire, fear, and affection. But these emotions aren’t volitional and don’t require thought. Complex emotions are different. Take jealousy, for example. To feel jealous, one has to desire something, recognize that someone else has that thing, and then feel negatively toward that person because of it. A dog that sees another dog chewing a bone doesn’t feel jealous; it just salivates.

The consciousness hurdle has to be overcome before robots can feel emotion. No one really understands what consciousness is, and only recently have scientists broadly agreed that animals are conscious. Still, futurists such as Ray Kurzweil believe that A.I. will pass the Turing test and achieve consciousness by 2029, or at least that by then machines will say they’re conscious. And how could we prove they aren’t?

However, some mathematical frameworks suggest that conscious minds process information in context, integrating it as a whole rather than breaking it down into components the way machines do. Some scientists believe computers can’t replicate the neural processes that assemble information into a complete picture and thus can’t become conscious. Time will tell, but in the interim, we should consider whether we actually want robots to feel.

“We don’t want to give a robot emotions; we just want them to be sensitive to our emotions,” says psychology professor Craig Smith. But not all roboticists agree. David Hanson develops robots that he hopes will one day empathize with humans. Hanson’s most famous robots, the Philip K. Dick android and the Einstein Bot, look uncannily like the men they are modeled on and can produce a full range of facial expressions, thanks to a skinlike material called “Frubber” and to “Character Engine” software that enables emotional identification, attentiveness, and mirroring.

Advocates of robot companions and social robots argue that emotions make robots more aware, responsive, entertaining, and functional, particularly when it comes to abiding by social conventions and expectations. But as science fiction teaches us, most robot-uprising plots involve emotional robots. In Karel Čapek’s R.U.R., the first work to use the word robot, humans invent robots to free themselves of labor. Everything’s OK until the scientists give the robots souls, which in R.U.R. amounts to giving them emotions. Immediately thereafter, the robots get angry at being enslaved by an inferior species and decide to exterminate the human race. Battlestar Galactica’s Cylons are similarly motivated by vengeance. Of course, it’s possible that emotional robots would want the best for humans, or perhaps they’d simply be indifferent. But do we want to take that risk?

Emotions facilitate survival, and not just for humans. As neuroscientist Antonio Damasio says, “too much emotion can hinder intelligent thought and behavior; however, too little emotion is even more problematic.” Two Italian scientists developed robots with emotional circuits and found that they were better than robots without such circuits at completing programmed tasks such as searching for food, escaping predators, and finding mates, leading the researchers to conclude that emotional states make robots more fit for survival.

For now, robots’ emotional capabilities are in the hands of everyone who interacts with them. Our relations with robots determine their emotional potency. If we relate to robots socially, not to mention romantically or sexually, then their emotional capabilities are a reflection of us. If robots can learn emotions through experience, then we will be their emotional guides, a thought both comforting and terrifying.

This article is part of Future Tense, a collaboration among Arizona State University, New America, and Slate. Future Tense explores the ways emerging technologies affect society, policy, and culture. To read more, visit the Future Tense blog and the Future Tense home page. You can also follow us on Twitter.