In 2007, General Motors ran a commercial featuring an assembly-line robot that loses its job after dropping a screw. The robot doesn’t look human—it’s a mechanical arm and some hydraulics mounted on a metal body with wheels.

But it definitely felt human, enough that viewers’ concerned and angry response compelled GM to pull a scene of the robot throwing itself off a bridge. It didn’t need a face, two arms, and two legs for people to empathize with its sad robot noises and string of dissatisfying jobs. It just needed to tilt its “head” the right way, and people could impress all sorts of human qualities upon it, just like we do with inanimate objects every day.

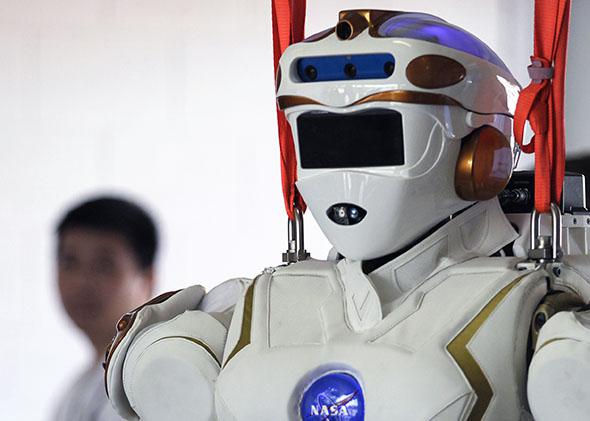

One of those qualities is gender, and unless given specific cues otherwise, most people faced with a robot tend to default to male. NASA’s robotic astronaut assistant Robonaut, for example, was designed to be gender-neutral. But that’s not how most people perceived it.

“People inevitably would assign a gender to the robot,” says Nicolaus Radford, a former NASA roboticist and one of the lead engineers on Robonaut. “I would say 99 out of 100 are quicker to identify a robot and use a ‘he’ pronoun. You know, ‘Tell me what he can do!’ ”

It’s not just an issue of language. In the not-too-distant future, robots will be social beings upon which we can heap all kinds of preexisting social constructs. Already, robots are helping with tasks like caring for the elderly and teaching—both fields traditionally associated with women. Research on human-robot interactions is revealing that gender plays a big role in how people perceive, communicate with, and treat robots, much like it does with humans. And a lot of what we’re bringing over to our technological companions of the future is old, tired stereotypes.

In 2009, for example, Julie Carpenter, a social science researcher at the University of Washington, asked 19 students to watch videos of two robots, one that was modeled after an adult woman and another that looked like a taller Wall-E with arms. The students then filled out surveys and answered questions about how humanlike and friendly the robots seemed, and whether they’d be comfortable with the robots in their homes.

Overall, the students expressed a preference for the female robot, though in this case whether it was simply a preference for a more human robot is unclear. But the students’ responses were telling. When asked to describe the robot, one student—gender unspecified—answered:

“Well, it’s female, so that’s a positive. … The feminine form is typified as being weak or fragile in some form, but really inviting and warm and more interactive. Whereas if it were a male robot and masculine design, then there’s a safety issue of, ‘OK. I gotta protect myself possibly.’ ”

Other research has yielded similar results showing that a robot’s perceived gender can change how a person interacts with it. In 2005, researchers asked students to explain dating norms to a robot that had either a feminine voice and pink lips or a masculine voice and gray lips; the majority of students identified the two versions as female and male, respectively.

The students then reported that the robot of their own gender knew more about dating, and gave wordier advice to the lovelorn bot of the opposite gender. And in a 2009 experiment at the Museum of Science in Boston, men were more likely to donate money to a robot with a female voice than with a male voice, even though nothing else about the robot changed.

“Robots have some traits, such as movement or their morphology, that trigger our tendency to attribute some agency and intelligence to robots, even if we know better,” Carpenter says. “So right now, we are developing some of our cultural norms for interaction with robots in different contexts.”

The creators of robots, then, have both a fantastic opportunity and a very real responsibility to consider what gender means as they design the machines that are becoming increasingly present in our hospitals, our schools, our homes, and our public spaces at large. Some researchers suggest gender stereotypes could be beneficial for robot interfacing, by, for example, capitalizing on our tendency to be more comfortable with women as caretakers. More feminine home health care robots could put patients at ease. But that might be a dangerous path, one that’s antithetic to the decades of ongoing work to bring women into fields like business, politics, and particularly science and technology. If robots with a feminine appearance are built only when someone wants a sexbot or an in-home maid—leaving masculine robots with all the heavy lifting—what does that say to the flesh-and-blood humans who work with them?

Radford, for one, was not on board with that message. So when he was put in charge of a team at NASA’s Johnson Space Center to build a rescue robot for the Defense Advanced Research Projects Agency Robotics Challenge, he decided it was time for a strong, utilitarian bot to be female.

“There’s just no reason that we can’t have robots that don’t have more of the appearance of our workforce,” Radford says. “There’s no reason that we don’t have robots that are inspired by the male form and robots that are inspired by the female form.”

To compete in the challenge, the robot needed to complete tasks that would demonstrate its ability to help humans in disaster situations: drive a car, navigate debris, climb stairs. Radford and his team modeled their robot after women’s first-responder gear and armor worn by women throughout history. They named her Valkyrie, a tribute to both a NASA robot built for a competition called FIRST and the XB-70 Valkyrie prototype bomber flown by NASA and the Air Force in the ’60s. It’s also a nod to the Valkyries of Norse mythology: female warriors who decided who among the battlefield dead could pass on to Valhalla.

Building a utilitarian female robot required an intentionality and attention to detail that went beyond the usual design process. Radford and his team hired a graphic designer to help construct clay shells that were then modeled and 3-D-printed, Radford says, to “get the form of the robot that right.” They brought in a French physicist-turned–fashion designer to help work out the overall appearance and style. Radford kept the robot’s gender quiet, even from most of his own team, for months.

“We were doing something so different,” Radford says, “that we wanted to make sure we got it as right as we could.”

Valkyrie’s resulting womanhood is subtle enough that on tours and demonstrations, some viewers still referred to Valkyrie as “he,” according to Radford. A few members of the press speculated that Val was a she, largely owing to the “extra volume” in the chest area. NASA’s official stance is that robots, Valkyrie included, don’t have gender, and that Valkyrie’s appearance originated from engineering decisions, including a need to move the robot’s 30-pound battery pack to its torso to balance its center of gravity. Once that resulted in some feminine (ahem) attributes, the team decided to run with it. “Although it looks like it does have a female form, it’s more a result of form and function versus actual design to be a female or a male,” says Jay Bolden, a public affairs officer with NASA’s Johnson Space Center.

To hear Radford tell it, by denying Valkyrie’s gender, NASA missed a big opportunity to reach out to the women and girls who could one day build their own robots. (It also makes them “a bunch of weenies.”) As proof, he points to one person he knows who particularly appreciated Valkyrie: his 7-year-old daughter. “She absolutely was in love with this robot,” Radford says. “It was a major source of inspiration for her. She talked about it all the time. She drew pictures of Valkyrie.”

All humans understand the world and their place in it in part by seeing how others who look like them are treated—how they talk, what they wear, what they do when they grow up. We’re not bound to those images, but having lots of types of people in lots of different roles opens the options for everyone. And if we’re going to be creating a new generation of machines to interact with as frequently, and as intimately, as we do our co-workers and friends, we should not cage them in with the same unimaginative and restrictive gender expectations that we humans are still struggling to free ourselves from today.

This article is part of Future Tense, a collaboration among Arizona State University, New America, and Slate. Future Tense explores the ways emerging technologies affect society, policy, and culture.