We all have, consciously or otherwise, a vision of perfect education: two people, a master and student, sitting together, talking. The classic Aristotelian tutorial model. When the king of Macedon wanted to educate his son Alexander, he simply hired the smartest person in the world.

The first information technology to alter that model was the written word. Like all ed-tech innovations, it was highly controversial at the time. Socrates was deeply skeptical of writing.

In the Phaedrus, Socrates said that written words “will create forgetfulness in learners’ souls, because they will not use their memories; they will trust to the external written characters and not remember of themselves.” Words, he believed, are “an aid not to memory, but reminiscence,” that give people “not truth, but only the semblance of truth; they will be hearers of many things and will have learned nothing; they will appear to be omniscient and will generally know nothing; they will be tiresome company, having the show of wisdom without the reality.”

As was usually the case, Socrates had a point. “Tiresome company, having the show of wisdom without the reality”—that sounds like most of the book-read, college-educated pedants I’ve had the displeasure of meeting. Compared with real, live people, written words are static, standardized, and unresponsive. Or, to use a modern education policy insult, a “one-size-fits-all solution.”

But in focusing on what was lost in translation with technology, Socrates failed to fully appreciate what might be gained. This is a common mistake. Writing extended the distance words could travel, from within earshot of a speaker to anywhere pieces of paper could be carried. Writing became a storage medium, making words potentially immortal.

Writing brought the educational value of words to more people and thus reduced the cost of words for each. Only the king of Macedon could hire the smartest man in the world as a tutor, because such a tutor was expensive, and there was only one of him. The distance, scale, and cost of education were changed for the better by technology.

Writing did one more thing. It improved the quality of education. We tend to think of those two people, master and student, talking, as the ideal educational environment. But it isn’t always. Through revision, collaboration, and extended work over time, writing allowed people to create and communicate structures of thought that weren’t possible in oral conversation. Writing made education much better. It was arguably the last technology to do so—until now.

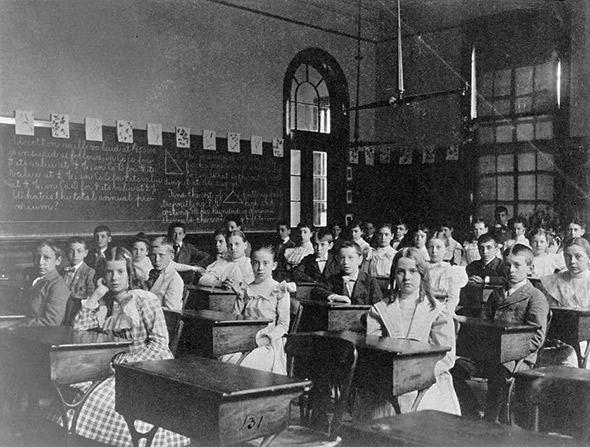

As time passed, every new information technology was adapted to education, never without controversy. The printing press greatly accelerated the benefits of distance, scale, and cost: more people in more places for less money. According to scholar George P. Landow, this greatly alarmed the faculty of the University of Paris, the second major university to emerge in the pre-Renaissance era, after the University of Bologna. It was expensive to make copies of words before the printing press. To save the cost of paper, scribes bunched all the words together, without spaces in between. Learning to read such text was a skill unto itself, which students practiced by reading out loud, under the supervision of a teacher. Printed books with these newfangled “spaces between words” allowed students to read silently, which closed a window onto the student’s thought process.

Like Socrates before them, the University of Paris professors had a point. The central challenge of teaching is making learning visible and responding accordingly. Printing technology made that harder. But they, too, failed to appreciate the compensatory advantages of more abundant books.

Next, the postal service created an open, egalitarian, publicly financed communications network: the Internet of its time. This more or less solved, forever, the problem of moving words over long distances. In 1639, the very same year that America’s first college was named after its benefactor, John Harvard, a nearby tavern in Boston was designated as the official repository for mail coming in and out of the British colonies. And so colonial readers of the Boston Gazette would read advertisements claiming that “Persons in the Country desirous to Learn the Art [of shorthand] may by having the several Lessons sent Weekly to them, be as perfectly instructed as those that live in Boston.”

The claim of “as perfectly instructed” might seem suspect, on its face. Then again, different subjects require very different methods of educational interaction, and shorthand is unusually text-dependent. And in fact there is an extensive research literature demonstrating that students learn just as well at a distance as in person.

Over time, the advances of information technology would increase the kinds of educational interactions that could be offered to more people in more places for less money. Radio brought spoken words; television and film brought moving pictures. And now the Internet has collapsed the remaining barriers of space, time, and cost to the point of nothingness. Any words, any sounds, any pictures, to anyone, anywhere, instantaneously, at a marginal cost that is indistinguishable from zero. It’s breathtaking.

Most of the online courses that currently exist, including the MOOCs that have received so much attention, largely take advantage of the recent developments I’ve just described—broadcasting educational words, sounds, and images at a scale and cost without precedent. We should not diminish that accomplishment. It represents an amazing step forward, in and of itself.

Yet what most of those courses haven’t managed is using information technology to improve the quality of education—something that hasn’t been done, arguably, since the invention of the written word.

But they will, soon, for two reasons. First, modern information technology allows for unprecedented interactivity and interpersonal connection, making possible communities of learners on a global scale. Second, the ability of computers to process information means that we can, for the first time, replicate and improve upon fundamental processes of learning.

Think again about our supposedly ideal model: master and student, sitting together, talking. It’s not as perfect as it seems. Even the smartest person in the world knows far less than what he doesn’t know. And the implicit process of diagnosis in the tutorial model, in which the teacher listens to the student, makes judgments about what they’ve learned, and responds accordingly, is bound by essentially human limitations. It is very difficult to see inside another person’s mind, to provoke and inspire their learning in just the right way. We value people who are great at this precisely because they are so rare.

It is no coincidence that all of the people leading the major MOOC providers (Sebastian Thrun and Peter Norvig at Udacity, Andrew Ng at Coursera, Anant Agarwal at edX) come from or near the academic disciplines of artificial intelligence and machine learning. Or that some of the best-designed online courses, whose effectiveness has been proven by rigorous research, come from institutions like Carnegie Mellon and Stanford, based on decades of research into cognitive psychology, neuroscience, human-computer interaction, and artificial intelligence.

The amount of data that will be generated by millions of people engaged in digital learning environments will yield insights that no single educator can obtain. The AI-based cognitive tutors that are already being used in online courses today will only get better over time. This is not science fiction. It is the unevenly distributed future, rapidly approaching the universal present.

Traditional colleges and universities, predictably, are following in the footsteps of their predecessors by badly underestimating the net educational benefits of information technology. These are organizations designed in the 19th century under conditions of information scarcity that simply no longer exist. And they are in a profound state of denial about all of this.

To start, they grossly underestimate how much of the education they currently provide is already wholly replaceable by a simple broadcast model. Every aspect of the standard lower-division lecture course (which happens to be hugely profitable for colleges) can be perfectly replicated online today and distributed at no marginal cost. It’s trivial, and it’s already been done. Any of you can, for example, take MIT’s entire mandatory undergraduate science and math curriculum, exactly as the students in Cambridge, Massachusetts, take it, on edX, for free.

If you can get colleges to admit this, they’ll fall back on assertions that are, unsurprisingly, rooted in the intangible, the ineffable, the unprovable, and the “you just have to be here to understand.” A few things to keep in mind about this:

First, whatever the benefits of things like the campus environment and interpersonal interaction with professors may be (and to be clear, they are certainly real), colleges have absolutely no evidence, meeting their own standards of scholarship, that credibly estimates or quantifies the size of those benefits. None. If you don’t believe me, ask them sometime.

Second, those remaining benefits have to be understood in relation to cost. As we all know, the cost of college is rising at an alarming rate. Even if colleges could prove how much value they add on top of what information technology can provide for free, that would still leave open the question of whether that value is worth the prices they charge.

Third, artificial intelligence and machine learning will inevitably continue to improve and steadily eat away at whatever plausible remaining value proposition traditional colleges may have.

All of which means we’re in an exciting time to be discussing technology and the future of higher education: the first time since the age of Socrates and Aristotle when information technology will make education not just more abundant but more effective for people around the world.

This article was adapted from remarks Kevin Carey delivered on April 30, 2014, at the Future Tense event “Hacking the University: Will Tech Fix Higher Education?” Future Tense is a collaboration among Arizona State University, the New America Foundation, and Slate. Future Tense explores the ways emerging technologies affect society, policy, and culture.