My one-man campaign against fear started on a chilly evening in San Francisco. I was discussing the future and technology as part of a book tour, and I only had time to answer one more question. From the stage, I called on a guy in his 50s. When he started talking, it quickly became obvious that he was quite upset. He was living in fear that texting and the Internet were stealing his girls, about 12 and 14, from him and his wife.

“They spend all their time like this!” he said, pretending to hunch over a smartphone. “I’m worried they won’t be able to communicate with normal people. How will they ever get a job?” Technology, he feared, was stealing his daughters—and I represented those insidious smartphones. He was so upset that he was yelling by the end of his question, and security began closing in on the man.

I understood why he was fearful, I told him: because he loves his daughters and wants them to have a good future. The fact is his daughters’ smartphones just haven’t been around as long as TV; we haven’t yet established norms, or language, for what’s socially acceptable and what’s off limits. Gadgets and technology may change quickly, but people and our behavior do not. In 20 years, his fear about smartphones taking his daughters will seem quaint. We are currently in the middle of coming to grips with what these devices mean to us. This isn’t a technology problem; it’s a broader cultural conversation about what kind of future we want to live in. We need to have more conversations in our families, in our offices, and in the media about what we want and what’s acceptable.

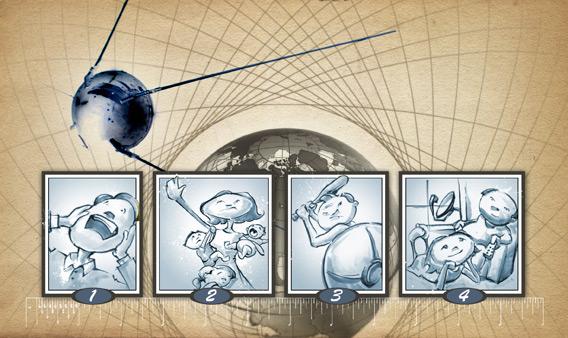

Over the last two years, I’ve been studying fear and technology, or the future of fear. I’ve seen some patterns in how fear and technology move into our lives. The process can be broken into four stages.

Stage 1: “It will kill us all!!”

Reaction upon first hearing about some early scientific or technology research being conducted by a university, corporation, or government. Usually the coverage appears in a technology or science magazine with a snappy headline but little substance. Next comes a clever screenwriter who turns the wee bit of science into a science fiction popcorn thrill ride that literally shows us how the world will end but contains even less real science.

Synthetic biology is one example of a technology currently in Stage 1. An editor at a large science magazine once told me that he loves the nascent science because no story can go from obscure university research paper to global apocalypse in fewer than 500 words like synbio. And it’s true: When people have no context, they hear about a new technology and they quickly extrapolate it out into a sci-fi-infused end of days. James Cameron’s Terminator story cycle, although fun, has really left a deep and fearful scar across popular culture’s understanding of artificial intelligence. (You would be surprised at how many very serious questions other futurists and I get about the robot apocalypse.)

Stage 2: “It will steal my daughter!!”

Reaction upon the dramatic success of said technology following its introduction to the market. This is usually where the moral panic sets in, perhaps brought on by a conversation in a bar/church/school-pickup line or a quick story on the nightly news telling you that you need to be afraid (typically accompanied by video of children walking home from school and using the technology).

Stage 2 is all about context. The technology is still unfamiliar, but now it has hit the market and invaded our lives, taking clear and malicious aim at all the things we love and care for. The angry father in San Francisco is the perfect example of that. He was struggling to make sense of a technology he didn’t completely understand and the effect it might have on the people he loved.

At this time, I believe we are witnessing smartphones move from Stage 2 to Stage 3. What is the indicator for this grand cultural shift? Dilbert. Popular culture is my barometer for technologies’ movement through the four stages, and Scott Adams’ comic strip is an excellent indication of tech trends and ideas as they move from the über-geeky to the edge of the mainstream. (Right now, about 33 percent of adult American cellphone owners have a smartphone, according to Pew.) On Dec. 17, Adams ran a strip about smartphones and fear. Adams’ technically incompetent Pointy-haired Boss explains to the evil Catbert that he doesn’t trust his new smartphone because it understands spoken language and he’s worried that it has its own agenda. Just after Catbert tells the boss that he’s just being paranoid, the smartphone verbally demands to be recharged or it will delete all the boss’s contacts.

Humor is what takes us from Stage 2 to Stage 3; humor gives us the chance to laugh at the very real fear—that the technology is becoming too smart, that we will lose the ability to function without it—inside us.

Stage 3: “I’ll never use it!!”

Reaction upon receiving the said technology as a Christmas or birthday gift after the boogeyman of doom has slowly faded. Soon after insisting that he/she will never use this thing, the person may realize how useful and/or fun it can be.

Stage 3 occurs when the early adopters (those who lined up for the iPhone, for example) take matters into their own hands and begin forcing their more technologically fearful friends and family to catch up by giving the hot new gadget as a gift. (See the Seinfeld episode in which Jerry bought his father an expensive personal organizer, which Morty saw as a tip calculator.) Typically, there is a bit of denial and rejection, but then they see the magic. For some, it’s the iPhone multi-touch screen. For others, it’s getting movies and music on the Web. For me, it was playing Berzerk on the Atari 2600.

This holiday season, Stage 3 technologies lined big-box stores and the pages of online retailers. This year, it was the iPad 2 and the Kindle Fire.

Stage 4: “What are you going on about?”

As people begin using the technology in their daily lives, they forget about the fear. I would humbly like to submit Johnson’s Addendum to Arthur C. Clarke’s Third Law: “Any sufficiently advanced technology is indistinguishable from magic until about two weeks of using the technology, upon which time it becomes mundane.”

This brings us to Stage 4, the most boring and anticlimactic end to the story. For me as a futurist, this is the most fascinating and amazing stage in the process. When a technology becomes mundane, it gets absorbed into the fabric of our lives and the history of our culture. This is true success for any technology development. Though their representatives might deny it, Google and Apple actually strive to be dull, not cutting-edge. Once a company’s product becomes ordinary, it has been knit into our lives and history.

No technology typifies the four stages of technology adoption more than the once terrifying but now humble satellite. The 20th century saw the satellite rip through each stage of technology adoption, beginning with world-ending horror and ending up as blissfully commonplace.

Stage 1: The science fiction author Arthur C. Clarke is often credited with inventing the idea of the geosynchronous satellite in his 1945 paper published in Wireless World magazine. But the first mention of a satellite station came earlier, in 1923, with The Problem of Space Travel: The Rocket Motor by Herman Potočnik, aka Hermann Noordung. Still, those who were neither sci-fi fans nor rocket scientists never thought of an object orbiting the Earth until Oct. 4, 1957.

The Soviet launch of Sputnik 1 kicked off the space race and brought satellite technology, previously a high-tech niche, into headlines. If you lived in America at the time, the satellite launch also signaled the end of the world. The Washington Post headline for Oct. 7, 1957, proclaimed “Sputnik Could Be Spy-in-the Sky.” In Danse Macabre, Stephen King captured that moment: He was sitting in a movie theater in Stratford, Conn., and watching Earth vs. the Flying Saucers when the movie turned off and the house lights came up. The theater manager came onstage and in a trembling voice told the audience of kids that the Soviets had been the first to launch a satellite into orbit. For the 10-year-old King, it signaled the first real terror of his life.

Quick on the heels of Sputnik, enterprising film director and producer Roger Corman released War of the Satellites (1958) to capitalize on America’s growing anxiety. But true to Stage 1 in our process of technology adoption, the film starts with a thin shred of science, the mysterious destruction of an American satellite, but quickly turns into an outer-space thriller with aliens trying to keep humans from venturing into space.

Through the ’60s and ’70s, satellites moved to Stage 2. In this case, the fear that the technology would steal daughters was flavored with doomsdayism. The winner of the U.S.-Soviet battle for space supremacy, it was understood, would also determine the technological master of Earth. Americans were particularly worried their children weren’t smart enough to keep pace with the Russians. Satellites became the weapon of choice for warring countries and menacing super-villains. Plots from Superman comics (The Witch of Smallville) to James Bond movies (Moonraker, Diamonds Are Forever) hinged on satellites that were poised to attack our country and destroy our way of life. No family was safe.

Illustration by Winkstink.

By the 1980s, however, it became obvious that satellites were not going to bring about the apocalypse, nor were they going to destroy our very way of life. Satellite technology’s transition from Stage 2 to Stage 3 was aided by humor. The 1985 satirical comedy Real Genius, starring Val Kilmer and directed by Martha Coolidge, had satellites not destroying our families but being used to pull off a college prank: using a laser from a satellite to pop enough popcorn to fill a house.

On June 17, 1994, satellite technology went mainstream. DirecTV launched its American broadcast satellite service from El Segundo, Calif. The uptake of satellite was slow initially, but with such offerings as the NFL Sunday Ticket and the NASCAR Hot Pass, rabid sports fans all over the world gained access to their favorite teams and stayed connected to home. Anyone who has been able to watch a Pittsburgh Steelers game in a city like Dubrovnik, Croatia, has experienced the magic wonder of satellite technology.

Today, firmly in Stage 4, we use satellite technology every day not only to watch TV but to make phone calls and get directions in our cars. We don’t stop and ponder the magnificent innovations that have gotten us from Sputnik to making a phone call wherever we might be. The technology that once taught horror writer Stephen King what true horror really was is now commonplace.

This article arises from Future Tense, a collaboration among Arizona State University, the New America Foundation, and Slate. Future Tense explores the ways emerging technologies affect society, policy, and culture. To read more, visit the Future Tense blog and the Future Tense home page. You can also follow us on Twitter.