To believe that the U.S. government planned or deliberately allowed the 9/11 attacks, you’d have to posit that President Bush intentionally sacrificed 3,000 Americans. To believe that explosives, not planes, brought down the buildings, you’d have to imagine an operation large enough to plant the devices without anyone getting caught. To insist that the truth remains hidden, you’d have to assume that everyone who has reviewed the attacks and the events leading up to them—the CIA, the Justice Department, the Federal Aviation Administration, the North American Aerospace Defense Command, the Federal Emergency Management Agency, scientific organizations, peer-reviewed journals, news organizations, the airlines, and local law enforcement agencies in three states—was incompetent, deceived, or part of the cover-up.

And yet, as Slate’s Jeremy Stahl points out, millions of Americans hold these beliefs. In a Zogby poll taken six years ago, only 64 percent of U.S. adults agreed that the attacks “caught US intelligence and military forces off guard.” More than 30 percent chose a different conclusion: that “certain elements in the US government knew the attacks were coming but consciously let them proceed for various political, military, and economic motives,” or that these government elements “actively planned or assisted some aspects of the attacks.”

How can this be? How can so many people, in the name of skepticism, promote so many absurdities?

The answer is that people who suspect conspiracies aren’t really skeptics. Like the rest of us, they’re selective doubters. They favor a worldview, which they uncritically defend. But their worldview isn’t about God, values, freedom, or equality. It’s about the omnipotence of elites.

Conspiracy chatter was once dismissed as mental illness. But the prevalence of such belief, documented in surveys, has forced scholars to take it more seriously. Conspiracy theory psychology is becoming an empirical field with a broader mission: to understand why so many people embrace this way of interpreting history. As you’d expect, distrust turns out to be an important factor. But it’s not the kind of distrust that cultivates critical thinking.

In 1999 a research team headed by Marina Abalakina-Paap, a psychologist at New Mexico State University, published a study of U.S. college students. The students were asked whether they agreed with statements such as “Underground movements threaten the stability of American society” and “People who see conspiracies behind everything are simply imagining things.” The strongest predictor of general belief in conspiracies, the authors found, was “lack of trust.”

But the survey instrument that was used in the experiment to measure “trust” was more social than intellectual. It asked the students, in various ways, whether they believed that most human beings treat others generously, fairly, and sincerely. It measured faith in people, not in propositions. “People low in trust of others are likely to believe that others are colluding against them,” the authors proposed. This sort of distrust, in other words, favors a certain kind of belief. It makes you more susceptible, not less, to claims of conspiracy.

A decade later, a study of British adults yielded similar results. Viren Swami of the University of Westminster, working with two colleagues, found that beliefs in a 9/11 conspiracy were associated with “political cynicism.” He and his collaborators concluded that “conspiracist ideas are predicted by an alienation from mainstream politics and a questioning of received truths.” But the cynicism scale used in the experiment, drawn from a 1975 survey instrument, featured propositions such as “Most politicians are really willing to be truthful to the voters” and “Almost all politicians will sell out their ideals or break their promises if it will increase their power.” It didn’t measure general wariness. It measured negative beliefs about the establishment.

The common thread between distrust and cynicism, as defined in these experiments, is a perception of bad character. More broadly, it’s a tendency to focus on intention and agency, rather than randomness or causal complexity. In extreme form, it can become paranoia. In mild form, it’s a common weakness known as the fundamental attribution error—ascribing others’ behavior to personality traits and objectives, forgetting the importance of situational factors and chance. Suspicion, imagination, and fantasy are closely related.

The more you see the world this way—full of malice and planning instead of circumstance and coincidence—the more likely you are to accept conspiracy theories of all kinds. Once you buy into the first theory, with its premises of coordination, efficacy, and secrecy, the next seems that much more plausible.

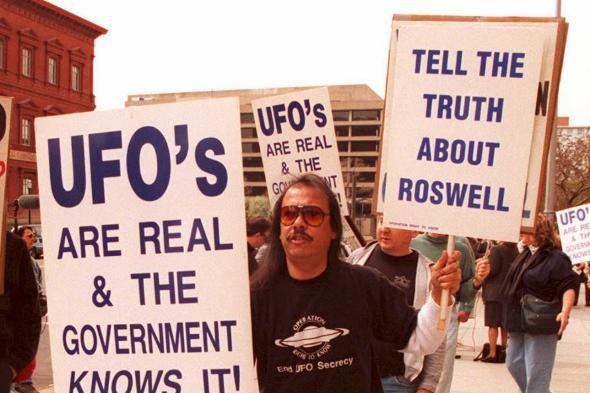

Many studies and surveys have documented this pattern. Several months ago, Public Policy Polling asked 1,200 registered U.S. voters about various popular theories. Fifty-one percent said a larger conspiracy was behind President Kennedy’s assassination; only 25 percent said Lee Harvey Oswald acted alone. Compared with respondents who said Oswald acted alone, those who believed in a larger conspiracy were more likely to embrace other conspiracy theories tested in the poll. They were twice as likely to say that a UFO had crashed in Roswell, N.M., in 1947 (32 to 16 percent) and that the CIA had deliberately spread crack cocaine in U.S. cities (22 to 9 percent). Conversely, compared with respondents who didn’t believe in the Roswell incident, those who did were far more likely to say that a conspiracy had killed JFK (74 to 41 percent), that the CIA had distributed crack (27 to 10 percent), that the government “knowingly allowed” the 9/11 attacks (23 to 7 percent), and that the government adds fluoride to our water for sinister reasons (23 to 2 percent).

The appeal of these theories—the simplification of complex events to human agency and evil—overrides not just their cumulative implausibility (which, perversely, becomes cumulative plausibility as you buy into the premise) but also, in many cases, their incompatibility. Consider the 2003 survey in which Gallup asked 471 Americans about JFK’s death. Thirty-seven percent said the Mafia was involved, 34 percent said the CIA was involved, 18 percent blamed Vice President Johnson, 15 percent blamed the Soviets, and 15 percent blamed the Cubans. If you’re doing the math, you’ve figured out by now that many respondents named more than one culprit. In fact, 21 percent blamed two conspiring groups or individuals, and 12 percent blamed three. The CIA, the Mafia, the Cubans—somehow, they were all in on the plot.

Two years ago, psychologists at the University of Kent, led by Michael Wood (who blogs at a delightful website on conspiracy psychology), escalated the challenge. They offered U.K. college students five conspiracy theories about Princess Diana: four in which she was deliberately killed, and one in which she faked her death. In a second experiment, they brought up two more theories: that Osama Bin Laden was still alive (contrary to reports of his death in a U.S. raid earlier that year) and that, alternatively, he was already dead before the raid. Sure enough, “The more participants believed that Princess Diana faked her own death, the more they believed that she was murdered.” And “the more participants believed that Osama Bin Laden was already dead when U.S. special forces raided his compound in Pakistan, the more they believed he is still alive.”

Another research group, led by Swami, fabricated conspiracy theories about Red Bull, the energy drink, and showed them to 281 Austrian and German adults. One statement said that a 23-year-old man had died of cerebral hemorrhage caused by the product. Another said the drink’s inventor “pays 10 million Euros each year to keep food controllers quiet.” A third claimed, “The extract ‘testiculus taurus’ found in Red Bull has unknown side effects.” Participants were asked to quantify their level of agreement with each theory, ranging from 1 (completely false) to 9 (completely true). The average score across all the theories was 3.5 among men and 3.9 among women. According to the authors, “the strongest predictor of belief in the entirely fictitious conspiracy theory was belief in other real-world conspiracy theories.”

Clearly, susceptibility to conspiracy theories isn’t a matter of objectively evaluating evidence. It’s more about alienation. People who fall for such theories don’t trust the government or the media. They aim their scrutiny at the official narrative, not at the alternative explanations. In this respect, they’re not so different from the rest of us. Psychologists and political scientists have repeatedly demonstrated that “when processing pro and con information on an issue, people actively denigrate the information with which they disagree while accepting compatible information almost at face value.” Scholars call this pervasive tendency “motivated skepticism.”

Conspiracy believers are the ultimate motivated skeptics. Their curse is that they apply this selective scrutiny not to the left or right, but to the mainstream. They tell themselves that they’re the ones who see the lies, and the rest of us are sheep. But believing that everybody’s lying is just another kind of gullibility.
