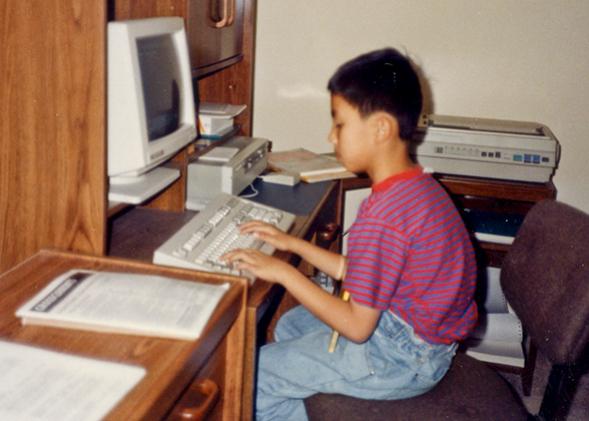

I started programming when I was 5, first with Logo and then BASIC. The picture above is me, age 9 (with horrible posture). By the time this photo was taken, I had already written several BASIC games that I distributed as shareware on our local BBS. I was fast growing bored, so my parents (both software engineers) gave me the original dragon compiler textbook from their grad school days. That’s when I started learning C and writing my own simple interpreters and compilers. My early interpreters were for BASIC, but by the time I entered high school I had already created a self-hosting compiler for a nontrivial subset of C. Throughout most of high school, I spent weekends coding in x86 assembly, obsessed with hand-tuning code for the newly released Pentium II chips. When I started my freshman year at MIT as a computer science major, I already had over 10 years of programming experience. So I felt right at home there.

OK, all of the above was a lie. With one exception: That is me in the photo. When it was taken, I didn’t even know how to touch-type. My parents were just like, “Quick, pose in front of our new computer!” (Look closely. My fingers aren’t even in the right position.) My parents were both humanities majors, and there wasn’t a single programming book in my house. In sixth grade I tried teaching myself BASIC for a few weeks, but quit because it was too hard. The only real exposure I had to programming prior to college was taking AP computer science in 11th grade, taught by a math teacher who had learned the material only a month before class started. Despite its shortcomings, that class inspired me to major in computer science in college. But when I started freshman year at MIT, I felt a bit anxious because many of my classmates actually did have over 10 years of childhood programming experience; I had less than one.

Even though I didn’t grow up in a tech-savvy household and couldn’t code my way out of a paper bag, I had one big thing going for me: I looked like I was good at programming. Here’s me during freshman year of college:

Photo courtesy Philip Guo

As an Asian male student at MIT, I fit society’s image of a young programmer. Thus, throughout college, nobody ever said to me (as they said to some other CS students I knew):

- “Well, you only got into MIT because you’re an Asian boy.”

- (while struggling with a problem set) “Well, not everyone is cut out for computer science; have you considered majoring in bio?”

- (after being assigned to a class project team) “How about you just design the graphics while we handle the backend? It’ll be easier for everyone that way.”

- “Are you sure you know how to do this?”

Although I started off as a complete novice (like everyone once was), I never faced any micro-inequities that impeded my intellectual growth. Throughout college and grad school, I gradually learned more and more via classes, research, and internships, incrementally taking on harder and harder projects, and getting better and better at programming while falling deeper and deeper in love with it. Instead of doing my 10 years of deliberate practice from ages 8 to 18, I did mine from ages 18 to 28. And nobody ever got in the way of my learning—not even inadvertently—probably because I looked like the sort of person who would be good at such things. (The software engineer Tess Rinearson writes about this dynamic from a different perspective in her essay “On Technical Entitlement.”)

Instead of facing implicit bias or stereotype threat, I had the privilege of implicit endorsement. For instance, whenever I attended technical meetings, people would assume that I knew what I was doing (regardless of whether I did or not) and treat me accordingly. If I stared at someone in silence and nodded as they were talking, they would assume that I understood, not that I was clueless. Nobody ever talked down to me, and I always got the benefit of the doubt in technical settings.

As a result, I was able to fake it till I made it, often landing jobs whose postings required skills I hadn’t yet learned but knew that I could pick up on the spot. Most of my interviews for research assistantships and summer internships were quite casual—people just gave me the chance to try. And after enough rounds of practice, I actually did start knowing what I was doing. As I gained experience, I was able to land more meaningful programming jobs, which led to a virtuous cycle of further improvement.

This kind of privilege that I and other people who looked like me possessed was silent, manifested not in what people said to us, but rather in what they didn’t say. We had the privilege to spend enormous amounts of time developing technical expertise without anyone’s interference or implicit discouragement. Sure, we worked really hard, but our efforts directly translated into skill improvements without much loss due to interpersonal friction. Because we looked the part.

In contrast, ask any computer science major who isn’t from a majority demographic (i.e., white or Asian male), and I guarantee that he or she has encountered discouraging comments such as “You know, not everyone is cut out for computer science.” They probably still remember the words and actions that hurt the most, even though the people making those remarks often aren’t trying to cause harm.

For example, one of my good friends took the Intro to Java course during freshman year and enjoyed it. She wanted to get better at Java GUI programming, so she got a summer research assistantship at the MIT Media Lab. However, instead of letting her build the GUI (as the job ad described), the supervisor assigned her the mind-numbing task of hand-transcribing audio clips all summer long. He assigned a new male student to build the GUI application. And it wasn’t as if that student was a programming prodigy: he was also a freshman with the same amount of (limited) experience that she had. The other student spent the summer getting better at GUI programming while she ground away mindlessly transcribing audio. As a result, she grew resentful and shied away from learning more CS.

Thinking about this story always angers me. Here was someone with a natural interest who took the initiative to learn more and was denied the opportunity to do so. I have no doubt that my friend could have gotten good at programming, and really enjoyed it, if she had had the same opportunities as I did. (It didn’t help that when she was accepted to MIT, her aunt, whose son had been rejected, congratulated her by saying, “Well, you only got into MIT because you’re a girl.”)

Over a decade later, she now does some programming at her research job, but wishes that she had learned more back in college. However, she had such a negative association with everything CS-related that it was hard to motivate herself to do so for fear of being shot down again.

One trite retort is “Well, your friend should’ve been tougher and not given up so easily. If she wanted it badly enough, she should’ve tried again, even knowing that she might face resistance.” These sorts of remarks aggravate me. Writing code for a living isn’t like being a Navy SEAL sharpshooter. Programming is seriously not that demanding, so you shouldn’t need to be a tough-as-nails superhero to enter this profession.

Just look at this photo of me from a software engineering summer internship:

Photo courtesy Philip Guo

Even though I was hacking on a hardware simulator in C++, which sounds mildly hard-core, I was actually pretty squishy, chillin’ in my cubicle and often taking extended lunch breaks. All of the guys around me (yes, the programmers were all men, with the exception of one older woman who didn’t hang out with us) were also fairly squishy. These guys made a fine living and were good at what they did, but they weren’t superheroes. The most hardship that one of the guys faced all summer was staying up late playing Doom 3 and then rolling into the office dead-tired the next morning. Anyone with enough practice and motivation could have done our jobs, and most other programming and CS-related jobs as well. Seriously, companies aren’t looking to hire the next Steve Wozniak; they just want to ship code that works.

It frustrates me that people not in the majority demographic often need to be tough as nails to succeed in this field, constantly bearing the lasting effects of thousands of micro-inequities. Psychology Today notes that according to one researcher, Mary Rowe:

[M]icro-inequities often had serious cumulative, harmful effects, resulting in hostile work environments and continued minority discrimination in public and private workplaces and organizations. What makes micro-inequities particularly problematic is that they consist in micro-messages that are hard to recognize for victims, bystanders and perpetrators alike. When victims of micro-inequities do recognize the micro-messages … it is exceedingly hard to explain to others why these small behaviors can be a huge problem.

In contrast, people who look like me can just kinda do programming for work if we want, or not do it, or switch into it later, or out of it again, or work quietly, or nerd-rant on how Ruby sucks or rocks or whatever, or name-drop monads. And nobody will make remarks about our appearance, about whether we’re truly dedicated hackers, or how our behavior might reflect badly on “our kind” of people. That’s silent technical privilege.

Ideally, we want to spur interest in young people from underrepresented demographics who might never otherwise think to pursue CS or STEM studies. There are great people and organizations working toward this goal. Although I think that increased and broader participation is critical, a more immediate concern is reducing attrition of those already in the field. For instance, according to a 2012 STEM education report to the president:

[E]conomic forecasts point to a need for producing, over the next decade, approximately 1 million more college graduates in STEM fields than expected under current assumptions. Fewer than 40% of students who enter college intending to major in a STEM field complete a STEM degree. Merely increasing the retention of STEM majors from 40% to 50% would generate three quarters of the targeted 1 million additional STEM degrees over the next decade.

That’s why I plan to start by taking steps to encourage and retain those who already want to learn. So here’s a thought experiment: For every white or Asian male expert programmer you know, imagine a parallel universe where they were of another ethnicity and/or gender but had the exact same initial interest and aptitude levels. Would they still have been willing to devote the 10,000-plus hours of deliberate practice to achieve mastery in the face of dozens or hundreds of instances of implicit discouragement they would inevitably encounter over the years? Sure, some super-resilient outliers would, but many wouldn’t. Many of us would quit, even though we had the potential and interest to thrive in this field.

I hope to live in a future where people who already have the interest to pursue CS or programming don’t self-select out of the field. I want those people to experience what I was privileged enough to have gotten in college and beyond: unimpeded opportunities to develop expertise in something that they find beautiful, practical, and fulfilling.

This piece is adapted from Guo’s blog.