Never trust gadget-makers’ claims about battery life. They usually employ weasel words (a machine that claims to last “up to” six hours between recharges is technically delivering if it runs out of juice in 45 minutes). And they’re riddled with fine-print caveats (the battery can last all week if you dim the display to the point of illegibility). That said, you can relax your skepticism just a bit for the new crop of notebook computers coming out this year. Apple’s new 13-inch MacBook Air promises 12 hours between charges. Well, “up to” 12 hours, but based on many reviewers’ tests, including my own, it handily manages a full day without a plug. Lots of new Windows-based laptops are offering similarly amazing stats, and I bet many will meet their claims, too. All of a sudden, your days of fighting fellow café patrons for the last available outlet are over.

Why is laptop battery life getting so much better? The nominal reason is Intel’s new processor, Haswell, the dramatic result of the company’s yearslong effort to alter its core assumptions about the future of technology. For much of its life, Intel optimized its processors for speed: every year, the chip giant released new ones that were the fastest ever, because it guessed that computers could never get too powerful. For three decades, that bet was correct. Every time Intel’s chips got faster, software makers came up with new uses for them: better games, audio and video editing, faster Web servers. It seemed likely that our thirst for computing power would never be quenched.

But over the past few years, Intel’s assumptions began to unravel. As computers got fast enough for most ordinary uses, speed was no longer a draw—if all you wanted to do was check your email and surf the Web, why would you buy a monster PC? Instead of faster computers, the world began fixating on cameras, music players, smartphones, tablets, e-readers, and tiny wearable machines. These devices weren’t computationally powerful, but they were small, light, thin, and offered great battery life. Intel’s fast, battery-hogging chips just couldn’t work in them.

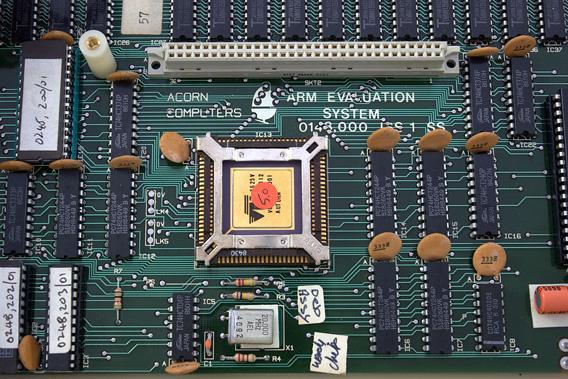

Instead, almost out of the blue, a rival chipmaker came along to fill the void. It wasn’t Apple, Google, Samsung, or any other familiar tech giant. It was ARM, a small British firm that is the most important tech company you’ve probably never heard of. ARM’s chips sit at the heart of almost every tech innovation in the past decade, from the digital camera to the iPod to the iPad to the Kindle to every smartphone worthy of the name. And it’s only due to competition with ARM that Intel created Haswell. When your laptop goes a full day without a charge, you can thank ARM.

If you’ve never heard of ARM, it’s partly because of its stealthiness: None of the products that use ARM’s technology are stamped with its brand. What’s more, compared to the biggest names in tech, ARM is almost comically tiny. In 2012, Intel and Google each made $11 billion in profit. Microsoft made $17 billion, Samsung made $21 billion, and Apple made $41 billion. ARM? In 2012, it made $400 million, less than 1 percent of Apple’s take. (The name, by the way, is pronounced “arm,” not “A-R-M.” The acronym once stood for Advanced RISC Machines, RISC being short for reduced instruction set computing, a chip-design philosophy that favors a small set of simple instructions. But in 1998, the company decided to drop the double acronym. Now, like KFC, ARM officially stands for nothing.)

Despite its Lilliputian scale, ARM’s processors are in everything. The company claims a 90 percent market share in mobile devices. Ninety percent! You almost certainly own at least one ARM-powered thing, and probably closer to a dozen. There’s an ARM in your phone, your tablet, your car, your camera, your printer, your TV, and your cable box. In 2012, manufacturers shipped 9 billion ARM-powered devices; by 2017, the company predicts that number will rise to 41 billion every year.

You might wonder how this is possible: why does ARM make so little money if its processors are in everything? Think about that question for a minute, though, and you find that it answers itself: ARM’s chips are in everything precisely because it makes so little money. That is, ARM has adopted a business model that, at best, will result in paltry returns for itself, and as a result, its chips have flowed everywhere. Indeed, it’s sometimes more useful to think of ARM as a monastic, money-shunning academic outfit rather than a corporation.

By design, the company doesn’t get involved in any of the risky, capital-intensive requirements of processor manufacturing. It doesn’t own chip-fabrication plants, it doesn’t buy silicon, and it doesn’t set up billion-dollar clean rooms. Instead, ARM does a single thing: It designs new processors. It doesn’t make them. Like a Tin Pan Alley songwriter, it licenses its designs to the world. Anyone who wants to make ARM chips can buy the designs and start cranking them out. Depending on the particular licenses they buy, companies can alter ARM’s chips and even rename them however they please, removing any trace of ARM’s work. Apple calls its latest chip the A6, Samsung sells the Exynos, and Qualcomm has the Krait. But deep down, they’ve all got ARM guts. (For an excellent in-depth look at ARM’s business model, read Anand Lal Shimpi’s series on the company.)

ARM’s business model grew out of the particular conditions of its founding. When it was launched in 1990, ARM faced tremendously daunting odds. At the time, Intel was well on its way to becoming a monopoly, so in order to stand out, ARM had to think of a completely new way of selling processors. Its other problem was that it had to make cheap chips meant for cheap machines. But the chip business is risky and expensive (almost all the costs involved are upfront), and it’s hard to make cheap processors from the get-go. ARM’s licensing model is an elegant solution to both problems. By letting other people make its chips, ARM didn’t have to bear any capital risk. By letting other people alter its chips, the company fostered competition that lowered costs: As companies found new ways to improve ARM’s designs and better techniques to produce them, prices would go down.

This is the same model that Google uses to market Android, with one crucial difference: By giving away its mobile operating system, Google expects to create a thriving smartphone industry that will one day help it reap huge rewards from its main business, which is advertising. ARM doesn’t have any such alternative source of revenue. Instead, when it signs up a new licensing partner, it gets a one-time licensing fee and, eventually, a tiny royalty on each device sold. The model essentially caps ARM’s long-term revenue. No matter how good its chip designs get, no matter how ubiquitous ARM-powered devices become, ARM is always going to make only a tiny sliver of the overall profits in the tech business.

But those tiny returns are also a great advantage. Faced with an existential threat posed by ARM, Intel has been rushing to refashion its processors for mobile devices. Soon, in addition to long-lasting laptops, we’ll see phones and tablets running Intel chips. Technically, these processors may work as well as ARM-based designs. But there’s no way that Intel can hope to compete with ARM’s business model. Because so many companies now make ARM chips, the market for mobile processors is under intense cost pressure, and there’s essentially no profit to be made in it.

So to effectively compete with ARM, Intel will have to lower its prices all the way to cost. Another way of saying this is: ARM has already won. And as the beneficiaries of all the cheap, battery-sipping devices powered by its chips, so have we all.