On Jan. 4, 2013, Aaron Swartz woke up in an excellent mood. “He turned to me,” recalls his girlfriend Taren Stinebrickner-Kauffman, “and said, apropos of nothing, ‘This is going to be a great year.’ ”

Swartz had reason to feel optimistic. For a year and a half, he’d been under indictment for wire and computer fraud, a seemingly endless ordeal that had drained his fortune and his emotional reserves. But he had new lawyers, and they were working hard to find common ground with the government. Maybe they’d finally reach an acceptable plea bargain. Maybe they’d go to trial, and win.

“We’re going to win, and I’m going to get to work on all the things I care about again,” Swartz told his girlfriend that day. It’s not that he’d been idle. In addition to his job at the global IT consultancy ThoughtWorks, he’d become a contributing editor to the Baffler, done significant research on how to reform drug policy, and completed about 80 percent of a massive plot summary of the novel Infinite Jest. But Swartz held himself to high standards, and there was always more to do: more books to read, more programs to write, more ways to contribute to the countless projects he’d signed on for.

Swartz and Stinebrickner-Kauffman started 2013 with a Vermont ski vacation. They were joined by the young daughter of Swartz’s ex-girlfriend, tech journalist Quinn Norton. He loved Norton’s daughter more than anyone in the world. Swartz adored children, and he could act like a child himself. A pathologically picky eater, he ate only bland foods: dry Cheerios, white rice, Pizza Hut’s personal pan cheese pizzas. He told friends he was a “supertaster,” extraordinarily sensitive to flavor—as if his taste buds were constantly moving from a dark room into bright light.

Though he called himself an “applied sociologist,” Swartz was best known as a computer programmer. His current project, a piece of software he called Victory Kit, was going well. Victory Kit would be an open-source, free version of the expensive community-organizing software used by groups like MoveOn—the sort of thing grassroots activists from around the world might use.

Some of those activists came to hear Swartz give a presentation on Victory Kit at a conference in upstate New York on Jan. 9. At the last minute, though, Swartz decided not to speak. His friend Ben Wikler says Swartz’s talk depended on someone else committing to join him in making their code open source. When he couldn’t secure that commitment in time, Swartz decided he wasn’t talking. “I remember being annoyed at him for being a stick-in-the-mud,” Wikler says.

Swartz had his principles, and he held to them forcefully. “Aaron generally felt like being a stickler about that stuff made the world better, because it actually pushed people to do the right thing,” says Wikler. He wouldn’t sign any contracts that might encourage patent trolling. He was finicky about his wardrobe, wearing T-shirts whenever possible. “Suits,” he wrote on his blog, “are the physical evidence of power distance, the entrenchment of a particular form of inequality.”

He wasn’t dogmatic about everything. He’d always been opposed to marriage, but he was starting to think he’d gotten that wrong. On Friday, Jan. 11, Stinebrickner-Kauffman stopped over at Wikler’s house. She and Swartz were coming over for dinner later that night, but she came by herself beforehand. As she played with Wikler’s new baby, she mentioned that Swartz had told her that, after the case was resolved, he might consider getting married. If that was possible, anything was possible.

But less than two miles away, in a small and dark studio apartment, Aaron Swartz was already dead.

At the beginning of every year, Aaron Swartz would post an annotated list of everything he’d read in the last 12 months. His list for 2011 included 70 books, 12 of which he identified as “so great my heart leaps at the chance to tell you about them even now.” One of these was Franz Kafka’s The Trial, about a man caught in the cogs of a vast bureaucracy, facing charges and a system that defy logical explanation. “I read it and found it was precisely accurate—every single detail perfectly mirrored my own experience,” Swartz wrote. “This isn’t fiction, but documentary.”

At the time of his death, the 26-year-old Swartz had been pursued by the Department of Justice for two years. He was charged in July 2011 with accessing MIT’s computer network without authorization and using it to download 4.8 million documents from the online database JSTOR. His actions, the government alleged, violated Title 18 of the U.S. Code and carried a maximum penalty of 50 years in jail and $1 million in fines.

The case had sapped Swartz’s finances, his time, and his mental energy and had fostered a sense of extreme isolation. Though his lawyers were working hard to strike a deal, the government’s position was clear: Any plea bargain would have to include at least a few months of jail time.

A prolonged indictment, a hard-line prosecutor, a dead body—these are the facts of the case. They are outnumbered by the questions that Swartz’s family, friends, and supporters are asking a month after his suicide. Why was MIT so adamant about pressing charges? Why was the DOJ so strict? Why did Swartz hang himself with a belt, choosing to end his own life rather than continue to fight?

When you kill yourself, you forfeit the right to control your own story. At rallies, on message boards, and in media coverage, you will hear that Swartz was felled by depression, or that he got caught in a political battle, or that he was a victim of a vindictive state. A memorial in Washington, D.C., this week turned into a battle over Swartz’s legacy, with mourners shouting in disagreement over what policy changes should be enacted to honor his memory.

Aaron Swartz is a difficult puzzle. He was a programmer who resisted the description, a dot-com millionaire who lived in a rented one-room studio. He could be a troublesome collaborator but an effective troubleshooter. He had a talent for making powerful friends, and for driving them away. He had scores of interests, and he indulged them all. In August 2007, he noted on his blog that he’d “signed up to build a comprehensive catalog of every book, write three books of my own (since largely abandoned), consult on a not-for-profit project, help build an encyclopedia of jobs, get a new weblog off the ground, found a startup, mentor two ambitious Google Summer of Code projects (stay tuned), build a Gmail clone, write a new online bookreader, start a career in journalism, appear in a documentary, and research and co-author a paper.” Also, his productivity had been hampered because he’d fallen in love, which “takes a shockingly huge amount of time!”

He was fascinated by large systems, and how an organization’s culture and values could foster innovation or corruption, collaboration or paranoia. Why does one group accept a 14-year-old as an equal partner among professors and professionals while another spends two years pursuing a court case that’s divorced from any sense of proportionality to the alleged crime? How can one sort of organization develop a young man like Aaron Swartz, and how can another destroy him?

Swartz believed in collaborating to make software and organizations and government work better, and his early experiences online showed him that such things were possible. But he was better at starting things than he was at finishing them. He saw obstacles as clearly as he saw opportunity, and those obstacles often defeated him. Now, in death, his refusal to compromise has taken on a new cast. He was an idealist, and his many projects—finished and unfinished—are a testament to the barriers he broke down and the ones he pushed against. This is Aaron Swartz’s legacy: When he thought something was broken, he tried to fix it. When he failed, he tried to fix something else.

Eight or nine months before he died, Swartz became fixated on Infinite Jest, David Foster Wallace’s massive, byzantine novel. Swartz believed he could unwind the book’s threads and assemble them into a coherent, easily parsed whole. This was a hard problem, but he thought it could be solved. As his friend Seth Schoen wrote after his death, Swartz believed it was possible to “fix the world mainly by carefully explaining it to people.”

It wasn’t that Swartz was smarter than everyone else, says Taren Stinebrickner-Kauffman—he just asked better questions. In project after project, he would probe and tinker until he’d teased out the answers he was looking for. But in the end, he was faced with a problem he couldn’t solve, a system that didn’t make sense.

Aaron Swartz was born in November 1986. The oldest of three brothers, he grew up in Highland Park, Ill., a wealthy suburb 23 miles north of Chicago, in a big, old Tudor Revival-style house on a wooded lot within walking distance of the grounds of the Ravinia summer music festival. His father, Robert, was a computer consultant, while his mother was a homemaker who loved knitting and beading. His grandfather, William Swartz, was the chairman of a sign company who was involved with Pugwash, a disarmament organization that won the Nobel Peace Prize in 1995. “The notion of trying to do good and make the world a better place suffused the way we looked at things,” says Robert Swartz. “The notion of not being interested in things, money, acquisitions is the way we looked at the world.”

Aaron was a precocious child, and an early reader. At 3 years old, his father recalls, he read a note on the refrigerator and then turned to his flabbergasted mother, asking, “What’s this free family entertainment in downtown Highland Park?” When Aaron was of school age, he entered the North Shore Country Day School, a picturesque private school in Winnetka, Ill. The school was 6 miles from his house, which made it difficult for friends to come over and play. Aaron, though, found ways to entertain himself—playing the piano, reading books, joking with his brothers.

From a young age, he was adept with machines and fascinated by puzzles. “When he was little he became interested in magic squares—squares in 3-by-3, where in all directions the numbers in the squares add up to the same number,” recalls Robert Swartz. “And so I suggested that he write a program to find magic squares, which I gave him a little bit of help with. But then once he knew how to program he picked it up relatively quickly.”

The Swartz family was one of the first in the area to have Internet access, before the heyday of graphical browsers. Aaron had computers early on, and he would tote a huge laptop from class to class. He built websites for himself (aaronsw.com), for his family (swartzfam.com), for people interested in “the up and down world of the case against Microsoft” (Redmond Justice), for those who wanted to convert text to ASCII binary and back again (Binary Translator), and for the Star Wars fan club Chicago Force. “He came to a Jedi Council (what we called our board of directors) meeting once when his parents dropped him off,” remembers Phillip Salomon, a group member. His early AIM screen name was “Jedi of Pi.”

Around that time, Aaron started to get bored with school. “He’d come to me and ask for things to read,” says Robert Ryshke, the head of NSCDS’s upper school from 2000 to 2005. “Aaron was an unusual character in the sense that, with an adult like me, the principal of the school, he was very assertive. He’d come to my office, schedule an appointment, open up his computer, and say, ‘I’ve been thinking. Do you have a book in this area?’ ”

Swartz felt strongly that North Shore Country Day didn’t meet his (or any other student’s) needs. In the summer of 2000, just before he started ninth grade, he launched a blog called Schoolyard Subversion. “Seriously, who really cares how long the Nile river is, or who was the first to discover cheese,” he wrote. “How is memorizing that ever going to help anyone? Instead, we need to give kids projects that allow them to exercise their minds and discover things for themselves. Instead of stuffing them with ‘knowledge’ we need to give them the power to find out what they want to know.”

Though Swartz was physically small, preternaturally intelligent, and iconoclastic, he wasn’t socially isolated. He had friends, and competed in Science Olympiad and on sports teams. Class videos he shot around this time reveal a kid with a goofy sense of humor (one of these, a six-minute take of a sock puppet delivering the weather, earned him an F, he notes, because it lacked “hard facts” about weather) and an easy rapport with his classmates—or, at least, the ones who co-starred in a loopy 11-minute “telenovela” that ends with a sombrero-wearing Swartz being gunned down by a boy in a purple football jersey. (“Ay, yi, mi muerto! Mato! Mato!” he yells, passionately if not quite grammatically.)

Swartz’s disaffection had less to do with his peers than with the larger concept of organized education. At first, he was determined to reform things from within, and his blog brimmed with optimism about mobile schools and inspirational teachers and a “big meeting” in which Swartz referred Ryshke, the head of the upper school, to books about education reform.

Eventually, his reformist ardor cooled, and Swartz focused on devising an exit strategy. This was the beginning of a lifelong pattern of impatience with unpleasant situations. Instead of trying to adapt to what he believed were rigid, broken institutions, Swartz would try to make those institutions adapt to him. And if they didn’t change, he would leave.

“We talked about going early to college, going the child prodigy route, but I think he wasn’t interested in taking English and taking pre-calculus,” says Ryshke. “For that whole year, I probably met with him and his parents four or five times, easily. And they were gracious. They obviously wanted to support their son; they didn’t want him to do something that was potentially not going to help his future. But, in the end, he was motivated. He was motivated to do something different.”

Swartz blogged about closing his ninth-grade year by airing his grievances at a school assembly:

I stood up in front of the entire high school, swallowed hard, and read:

Everyday, millions of innocent children are unwillingly part of a terrible dictatorship. The government takes them away from their families and brings them to cramped, crowded buildings where they are treated as slaves in terrible conditions. For seven hours a day, they are indoctrinated to love their current conditions and support their government and society. As if this was not enough, they are often held for another two hours to exert themselves almost to the point of physical exhaustion, and sometimes injury. Then, when at home, during the short few hours which they are permitted to see their families they are forced to do additional mind-numbing work which they finish and return the following day.

This isn’t some repressive government in some far-off country. It’s happening right here: we call it school.

Neither Robert Ryshke nor other NSCDS sources could remember whether this actually happened. (“It would not be unlike Aaron to have done this,” Ryshke acknowledges.) Regardless, the story reflects his belief that high school was a malevolent enemy that needed to be vanquished. No matter what anyone else thought or said, Swartz knew he was making the right decision. (“He said he had tried to convince a lot of his classmates to drop out with him, and he hadn’t succeeded with any of them,” says Taren Stinebrickner-Kauffman, describing Swartz’s memories of that time. “I can only imagine what their parents were thinking.”) In the summer of 2001, at age 14, Swartz withdrew from North Shore Country Day.

While Swartz struggled to make his way in the offline world, his online life was thriving. He’d accompanied his father on a business trip to MIT, where he sat in on a lecture by Philip Greenspun, a professor and open-source-software advocate. Greenspun had a company called ArsDigita, which sponsored a contest in which teenagers competed to build useful, non-commercial websites. Swartz entered the contest in 2000 and was honored as a finalist for his entry, The Info Network, an encyclopedia that anyone could edit. (This was months before Wikipedia launched in 2001.)

“Getting ‘real information’ to people on the World Wide Web is 13-year-old Aaron Swartz’s job. He’s tired of all the banner ads, the sponsorships and other miscellaneous ‘junk’ hogging the screens,” explained the Chicago Tribune in a June 2000 article about Swartz’s contest entry. “That’s not what the Internet was made for. It was based on open standards and freedom, not ads,” Swartz told the Tribune. It didn’t matter that Swartz’s friends and family were the only ones that used The Info Network, or that, for some reason, its highest-rated entries tended to concern Chicago Cubs benchwarmers like Shane Andrews and Jeff Reed. At an early age, he’d discovered what he loved to do: find information, organize it, and share it with anyone who cared to look.

The Web also enabled Swartz to thrive socially. “I have developed my most meaningful relationships online,” the 14-year-old Swartz wrote in April 2001. “None of them live within driving distance. None of them are about my own age. Even among those who I would not count as ‘friends,’ I have met many people online who have simply commented on my work or are interested by what I do. Through the Internet, I’ve developed a strong social network—something I could never do if I had to keep my choice of peers within school grounds.”

He connected with his online network via his blog, which he started in early 2002 and which quickly became popular among the hard-core tech set. Swartz was a good, clear writer even as a teenager, opinionated and prolific. If he often came across as aggressive or disdainful, it was likely because he was trying to compensate for his youth—which, early on, was something he was always conscious of, and which he didn’t advertise. Wes Felter, a researcher and blogger who knew him at the time, says Swartz would reject “bland arguments” that he was just a kid and didn’t have enough experience to be spouting off his opinions. “He would fight back and say, ‘No, I believe in a certain thing, and unless you actually provide a good argument, I’m not going to change my mind.’ ”

Many of Swartz’s correspondents were involved in the Semantic Web movement, a push to structure the online world so it would be easier for computers to parse. The dot-com boom had prompted tremendous, rapid growth, and the Web was like a city with no zoning ordinances. The World Wide Web Consortium (W3C), an organization created by World Wide Web inventor Tim Berners-Lee in 1994, pressed to standardize the Internet—to ensure that pages would display properly, and that the transmission and retrieval of information would keep getting better, not worse.

The W3C did a lot of this work through mailing lists. On these email chains, participants hashed out coding questions, debated standards, and laid the groundwork for the future of the Internet. Though the lists were primarily used by academics and professional programmers, anyone could participate. “There was a tradition started in the early days of the Internet. It was a meritocracy,” says Brewster Kahle, founder of the Internet Archive. “So if you are talented, and help, then you’re a first-class member of whatever group you want to be a part of.”

Aaron Swartz, then 13, posted his first message to the rdfweb-dev group on Aug. 21, 2000: “Hello everyone, I’m Aaron. I’m not _that_ much of a coder, (and I don’t know much Perl) but I do think what you’re doing is pretty cool, so I thought I’d hang out here and follow along (and probably pester a bit).”

Lots of kids are obsessed with trains. Not as many are passionate about tracks and switches and signals. But even then, Swartz was fascinated by the foundational inadequacies that kept large systems from working as smoothly as they could. This was precisely the stuff that the academics at W3C cared about. Swartz fit right in.

Around this time, Swartz also joined a working group devoted to RSS. The news-reading technology had originally been developed by Netscape, but the company lost interest in maintaining it, leading online tinkerers to pick up the slack. As of 2000, two groups of programmers wanted to take RSS in two different directions. One of them was a group affiliated with the Semantic Web, which moved to rewrite the standard to include metadata that was easier to parse. Swartz aligned himself with this crowd, and helped work on a standard called RSS 1.0.

Many obituaries have stated that Swartz created or co-created RSS. He didn’t. Swartz was part of a splinter group that created a version of RSS that relatively few people used. Nevertheless, his contributions were real, and valued, and his collaborators were inevitably surprised when they learned Swartz was a teenager.

“You meet these people in text originally,” remembers Dan Connolly, a software engineer who spent 15 years affiliated with W3C. “The guy’s writing code, making intelligent comments; as far as you know, he’s your peer. Then you find out he’s 14, and you’re like, ‘Oh!’ ”

In December 2001, Aaron Swartz took to his blog to describe an unusual dream:

I walked in, not quite knowing what to expect. It was a modernly-designed loft with sleeping quarters, a meeting room, ping-pong tables and computers spread around. Windows were everywhere and light streamed making the place feel airy and bright. Many of my friends from the Internet were there, as well as a number of people I didn’t know, but who seemed very friendly. We were all about the same age.

We were working together on a project that we thought would change the world. We were committed to it, and worked well as a team: we helped each other out with what needed to be done, and kept each other’s enthusiasm up. We worked hard, but we also took time off to play ping-pong or go water-sliding. There was a bulletin board to coordinate events as we tended to keep irregular hours.

The team was rather large, but I got to know everyone on it well and we became good friends. We all worked to support each other, and everything was run democratically. New folks who wanted to help were invited in, but the group had to agree on them before they could join.

We learned all the time, both finding out the skills we needed to know ourselves, and teaching each other how to improve. While we knew there was a lot to be done, we focused on our one project with a single-minded determination, finishing it and solving all the problems that it raised.

Whether he knew it or not, that dream world he was describing really existed, minus the water slides, and Swartz would become one of its most important heirs.

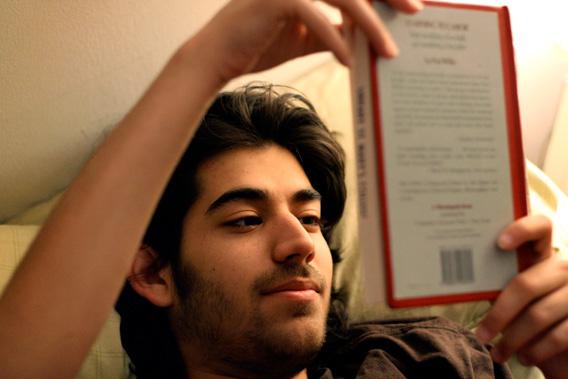

There is a photograph of a teenaged Aaron Swartz sitting on a bench, wearing a “GNU’s Not Unix” T-shirt, and chatting with law professor Lawrence Lessig. This is a family picture of sorts, a snapshot of three generations of data idealists.

Swartz’s T-shirt came from a group called the Free Software Foundation, and the slogan refers to the operating system the foundation created. GNU/Linux (GNU stands for “GNU’s Not Unix”) is a free, community-generated alternative to the Unix operating system, conceived in the 1980s by a software developer named Richard Stallman.

In the 1970s, Stallman was one of many programmers affiliated with MIT’s Artificial Intelligence Lab. As Steven Levy tells it in Hackers, his history of the early days of modern digital computing, the AI Lab was a sort of programming utopia that drew computer enthusiasts from all around—a Brook Farm for the digital age. It was a flat, non-hierarchical system, where you were judged on the caliber of your work, not age or status or title or educational background. “There were people that were hangers on, that were not real students or staff members. They just were there, and they were helping,” says Brewster Kahle, an AI Lab affiliate in the early 1980s. “And that openness was very creative and wonderful.”

Stallman and his fellow hackers wrote and maintained the software that was central to the lab’s research. But in many ways, this was less a workplace than a political awakening. “Hackers spoke openly about changing the world through software, and Stallman learned the instinctual hacker disdain for any obstacle that prevented a hacker from fulfilling this noble cause,” writes Sam Williams in Free as in Freedom, his 2002 biography of Stallman. “Chief among these obstacles were poor software, academic bureaucracy, and selfish behavior.”

Steven Levy calls Stallman “the last of the true hackers”—of everyone affiliated with the AI Lab, he was the one who most avidly ordered his life around the group’s ethic. While other affiliates moved on to private industry and occasionally to great fortune, Stallman never lost his radical idealism. (In this way, he is sort of the Ian MacKaye of computing.) Stallman, who still keeps an office in what MIT now calls its Computer Science and Artificial Intelligence Laboratory, founded the Free Software Foundation in the mid-’80s and remains its president today. The organization is dedicated to the proposition that free software (“free as in freedom, not free as in beer”) is a moral imperative.

Swartz didn’t know Stallman personally, but he was inspired by the programmer’s morals, and by the fact that he’d fostered an organization that took ethics seriously while also getting things done. In 2002, Swartz saw Stallman speak at the O’Reilly Open Source Convention. “The most interesting thing I learned … was how human Stallman is,” Swartz wrote afterward. “As people asked him long questions he would practice his dance steps. He’d make jokes about everything. I could really see being him.”

At that same conference in 2002, the keynote address was delivered by Lawrence Lessig—the Richard Stallman of the copyright movement. Lessig, then at Stanford and now at Harvard, has written prolifically about inequities in U.S. copyright law. Corporate interests pressure Congress to keep extending the scope and duration of copyright protections, Lessig believes, keeping material out of the public domain and stifling the kind of innovation that arises from sharing and remixing. In the introduction to his 2004 book Free Culture, Lessig notes that “all of the theoretical insights I develop here are insights Stallman described decades ago.”

In 2001, Lessig came up with his own variation on Stallman’s Free Software Foundation, an organization he’d call Creative Commons. Lessig wanted to reform copyright laws by giving content creators more options for licensing their work—allowing them to specify, for instance, that anyone could use a photo non-commercially, or that people could adapt it without having to ask permission.

Lessig hired Lisa Rein, a writer and archivist, to help create the Creative Commons licensing metadata.* She in turn suggested that Swartz, whom she knew from the Semantic Web community, should be the one to supervise the site’s metadata implementation. “I won’t lie and say it wasn’t hard at the time to convince these people that I needed this 14-year-old on the project,” she remembers. “I took a pretty big hit for it politically at the time. Until they met him. It didn’t make sense to anybody until they met him.”

Ben Adida, a contractor on the project, remembers Swartz as an active, important participant, “an extremely talented software engineer who just happens to be 14 and wearing a T-shirt that’s three sizes too big for him.” The two worked closely on the metadata implementation, and not always harmoniously. “He had incredibly high standards and we were not meeting them,” remembers Adida. “He was very critical of my work and he said so publicly. The guy was pretty hard on me.”

Swartz expected excellence from those around him, but he also cared deeply about connecting with his new colleagues. In April 2002, he flew to San Francisco for a Creative Commons event, and Rein chaperoned him around the city. She saw it as her role to ensure he met the right people. “I sort of decided he was going to be [either] a good superhero or a super-villain,” she says. Rein introduced him to everyone she knew in the open-access world, people who would become his friends and collaborators. For Swartz, it was an eye-opening, you-are-not-alone experience.

“I know that Aaron spent a lot of his early life really struggling with the fact that he was really interested in and curious about a lot of things that were not interesting to other people,” says Seth Schoen, a technologist with the Electronic Frontier Foundation. Schoen met Swartz in 2002, when he was 23 and his soon-to-be friend was 15.* “I think a lot of the people he regarded as his peers and who regarded him as a peer were at least 10 years older than he was and often much more. And he felt that that was where the action was, where his interests were.”

Swartz had found his people, and he’d met them in the flesh, not just as names on the To: line of a mailing list. From 2002 to 2004, he spent a lot of time in their company, attending and speaking at numerous conferences about emerging technologies and tech issues. (When he was at home, he spent a lot of time fighting with his brothers and parents about who got to use the family Segway.)

Despite his youth, Swartz wasn’t treated like some adorable tech-world mascot. “I mean, socially, it was a lot of nerdy people there,” remembers Wes Felter. “It wasn’t a really sophisticated scene, honestly. … He was obviously less mature than other people there, but not by a wide margin.” Days were spent listening to speeches; after-dinner activities might include “a trip to the Apple Store to check out the then-new iMac, the one that looked like a tablet attached to a globe,” as Joey “Accordion Guy” deVilla put it in a recent online remembrance.

By 2004, Swartz had helped launch Creative Commons, worked on the RSS 1.0 standard, created and maintained a popular blog, and had a hand in countless other large and small projects. But he was also about to turn 18, and regardless of his suspicions about organized schooling, he was expected—by his parents and most everyone else—to go to college. In the summer of 2004, he enrolled at Stanford University.

For Swartz, Stanford felt less like the dream world of Creative Commons and more like a return to everything he hated about high school. By his first week in Palo Alto, he’d blogged that “it doesn’t strike me that most Stanford students (and professors) are exceptionally bright.”

College was not an intellectual dream world—it was just another place that needed fixing. “If I wanted to start a more effective university, it would be pretty simple,” he wrote on his third day at Stanford. “Hire the smartest people and accept the smartest students, get them to work on projects that interest them … organize a bunch of show-and-tells and mixers, and for the most part let them figure stuff out on their own.”

Swartz lived in Roble Hall, in a suite with three other people. “He was a pretty introverted guy, I was a pretty introverted guy,” remembers his roommate, Rondy Lazaro. “A few people in his dorm knew him for his work in computer programming. To them he was the Aaron Swartz.” To most people, though, he was just the guy at the end of the hall with the recumbent bicycle and filing cabinet.

Swartz studied sociology. “The other night, when [redacted] asked me why I switched from computer science to sociology, I said it was because Computer Science was hard and I wasn’t really good at it, which really isn’t true at all,” he wrote on his blog. “The real reason is because I want to save the world.”

In several posts, he chronicled his Stanford experience like an anthropologist taking field notes. He wrote about campus groupthink and critiqued his classes. Occasionally, his loneliness peeks through:

Stanford: Day 58

Kat and Vicky want to know why I eat breakfast alone reading a book, instead of talking to them. I explain to them that however nice and interesting they are, the book is written by an intelligent expert and filled with novel facts. They explain to me that not sitting with someone you know is a major social faux pas and not having a need to talk to people is just downright abnormal.

I patiently suggest that perhaps it is they who are abnormal. After all, I can talk to people if I like but they are unable to be alone. They patiently suggest that I am being offensive and best watch myself if I don’t want to alienate the few remaining people who still talk to me.

Swartz may not be the most reliable narrator of his college experience. Multiple people who knew him at Stanford say he wasn’t a complete loner, and that he was always ready to have a conversation. But the blog—which he chose not to share with his fellow students—is not the work of someone who’s reveling in undergrad life. His classes were insufficiently engaging and challenging, and he pined for a girl he called TGIQ (for “The Girl in Question”) who always managed to “disappear before I can catch up with her.” Instead of chatting up his fellow freshmen, he haunted the office hours of professors like Lessig. “It’s fun, although it bothers me to bother them,” he wrote.

Worst of all was that his fellow students didn’t think the way he thought. Before he’d met the Creative Commons crew, he’d struggled to find peers he could relate to. “I remember he was really hoping that that’d change when he went to Stanford,” says Seth Schoen. “And I visited him there and he basically said that it hadn’t—that even the other Stanford undergrads around him weren’t curious about the things he was curious about.”

It’s probably more that they weren’t curious in the same way he was curious—that they accepted Stanford at face value and got the most out of it they could rather than questioning everything it stood for. Still, he tried to make Stanford work. In 2005, he helped launch the Roosevelt Institute Campus Initiative, a group pushing to get college students involved in politics. But he became increasingly disengaged from school, spending more time off campus with people who worked in political and data activism. He also supplemented his studies with a lot of outside reading. “He read more fiction as an 18-year-old computer genius than I read [now] as a creative writing grad student,” remembers Kat Lewin, one of Swartz’s dorm-mates.

The summer before he entered Stanford, Swartz read two books that changed his worldview. Moral Mazes, which he would later call his all-time favorite book, is an ethnographic study of American corporate managerial culture. In it, Robert Jackall examines the institutional logic of the corporate world, and explains how diffused responsibility and organizational insularity create a culture that rewards managers for doing the wrong thing. In Understanding Power, which treads similar ground, linguist and political activist Noam Chomsky expounds on how power structures lead good people to do horrible things.

Both books are indictments of bureaucracies—of how giant organizations harm outsiders who come into contact with them and those insiders who refuse to play the game. For someone like Swartz, predisposed to resist feeling like a cog in a machine, this was the intellectual justification he needed to kiss off the industrial education complex. Like North Shore Country Day School before it, Stanford never stood a chance.

Swartz took the first out he could find. It came courtesy of essayist and entrepreneur Paul Graham, who’d founded a company called Y Combinator in 2005. Graham, who made millions selling his company Viaweb to Yahoo, believed that smart and restless talents like Swartz ought to quit school and start building things. He invited budding entrepreneurs to send him proposals for tech startups; he’d pick the ones he liked and bring the founders to Cambridge, Mass., for a summer’s worth of bootstrapping.

Swartz pitched Graham something called Infogami, a platform that would help people build structured, data-driven, content-rich websites. It was a logical conceptual progression from the Semantic Web projects Swartz had been steeped in, and it became one of eight businesses to be funded that year. Not long after Mark Zuckerberg left Harvard to marinate in Palo Alto’s start-up culture, Swartz made the opposite move, ditching California after just one year to build a startup on the East Coast. His college career was over.

Infogami flopped—there’s no other way to say it. Swartz had no experience building something this ambitious from the ground up. He had trouble attracting investors, as he struggled to articulate the specific problem that Infogami was supposed to solve. And Swartz and his roommate/collaborator, a young Danish programmer named Simon Carstensen, did not make great partners. When Carstensen arrived at the small, stuffy MIT dorm room that they’d share that summer, it was the first time the two men had ever met. “I think my memory is sitting in this dormitory and coding and it being very hot,” Carstensen recalls.

Swartz rewrote a lot of Carstensen’s code, and at the end of the summer, the Dane returned to Europe and didn’t work on Infogami again. Swartz stuck around Boston, but he started to lose patience with his startup. He was alone, staying at Paul Graham’s house, and he was miserable:

One Sunday I decided I’d finally had enough of it [Infogami]. I went to talk to Paul Graham, the only person who had kept me going through these months. “This is it,” I told him. “If I don’t get either funding, a partner, or an apartment by the end of this week, I’m giving up.” Paul did his best to talk me out of it and come up with solutions, but I still couldn’t see any way out.

The next night I had dinner with Paul and his friends. They noted my birthday was tomorrow and asked me what I wanted. I thought for a moment about what I wanted most. “A cofounder,” I finally said. We all laughed.

Around the same time, two other Y Combinator participants desperately needed help for their own startup, a social news site called Reddit. Graham’s solution was simple: Infogami would merge with Reddit, creating a new umbrella company called Not a Bug. Swartz moved into Alexis Ohanian and Steve Huffman’s Davis Square apartment and got to work.

The story of Swartz’s time at Reddit is a complicated one. Swartz wasn’t involved in conceptualizing Reddit; when he came aboard, the project was already on its feet. While Ohanian and Huffman didn’t consider him a co-founder, Swartz often employed the term.

What can be said definitively is that Swartz was unhappy at Reddit, and the Reddit crew was unhappy with him. The original plan was for Huffman to help Swartz with Infogami while Swartz helped with Reddit, and for both projects to run off of a common back end that the two of them would build together. Huffman was excited to work with Swartz, whom he considered a talented programmer. “There was a time for a couple of months when we were like, OK, we’re gonna start this new thing and it’s gonna be bigger than Reddit, bigger than Infogami,” says Huffman. “And then it became pretty clear a few months in that that was not gonna be the case.”

The Infogami launch didn’t go well, and Swartz became demoralized. “He got sick of working on Reddit, and I never wanted to work on Infogami at all,” says Huffman. “That was basically the beginning of the end.”

For months, Swartz did no work on Reddit, though he continued to live with Huffman and Ohanian. His fitful mind focused on other things, including an unsuccessful run for a position on the Wikimedia Foundation’s board of directors. And, as he’d done during his lowest periods in high school and college, he vented his frustrations on his blog. “I don’t want to be a programmer,” he wrote in May 2006, when he was 19. “When I look at programming books, I am more tempted to mock them than to read them. When I go to programmer conferences, I’d rather skip out and talk politics than programming. And writing code, although it can be enjoyable, is hardly something I want to spend my life doing.”

Swartz’s quest for personal fulfillment made for an unpleasant work environment. With Huffman serving as Reddit’s sole full-time engineer, it was unclear whether the site would succeed. But in October 2006, 16 months after Reddit was founded, Condé Nast purchased the company for an undisclosed sum, reportedly somewhere between $10 million and $20 million. Some of that money went to Graham and some went to Chris Slowe, a part-time programmer who had equity in the company. Swartz, Huffman, and Ohanian split the rest three ways. (Swartz gave some of his money to Simon Carstensen, as a reward for his early work on Infogami.)

As part of the deal, the Reddit team moved from Boston to San Francisco; the openly unhappy Swartz was not expected to go with them. But for some reason—be it masochism, a sense of duty, or a belief that a change of scenery might make things better—he decided to move back west.

The Reddit staff worked out of the Wired office, in a corner that had been cleared especially for them. This was a physical environment very similar to the idealized “modernly-designed loft” the teenage Swartz had dreamed about in 2001—a big, open space with a personal chef who made everyone breakfast. But Swartz was predictably miserable, and not at all suited to corporate life. Every meeting, every banality must have seemed like a moral maze. He stopped showing up at the office, wrote blog posts critical of his co-workers, took impromptu trips to Europe, and did very little work. Once again, he moved to separate himself from an environment that failed to live up to his ideals. This time, though, he didn’t get to leave on his own terms. Less than three months after the Condé Nast acquisition went through, Ohanian and Huffman asked Swartz to leave.

Around the same time he was fired from Reddit, Swartz wrote a long story on his blog about a man who starved himself, then committed suicide.

The day Alex killed himself, he was awoken by pains, worse than ever. He rolled back-and-forth in bed as the sun came up, the light streaming through the windows eliminating the chance for any further sleep. At 9, he was startled by a phone call. The pains subsided, as if quieting down to better hear what the phone might say.

It was his boss. He had not been to work all week. He had been fired. Alex tried to explain himself, but couldn’t find the words. He hung up the phone instead.

The day Alex killed himself, he wandered his apartment in a daze.

“Alex” was originally named “Aaron.” (Swartz took the post down for a time, then changed the character’s name when he reposted it.) Alexis Ohanian, whose then-girlfriend had attempted suicide less than a year earlier, called the police and had them check on Swartz, who was fine. He later explained away the blog post as a misunderstood attempt at fiction: “I was deathly ill when I came back from Europe; I spent a week basically lying in bed clutching my stomach. I wrote a morose blog post in an attempt to cheer myself up about a guy who died. (Writing cheers me up and the only thing I could write in that frame of mind was going to be morose.)”

It’s impossible to say whether this post was a melodramatic story, a cry for help, or a bit of morbid foreshadowing. At the time, though, it served as a dramatic and definitive end to an unsatisfying chapter in his life. Swartz was 20 and jobless. But now, at least, he felt like he had control of his life again.

Sooner or later, every true believer gets the opportunity to sell out—to put money or comfort or expediency ahead of what he claims to value most. In the early 1980s, the MIT AI Lab was falling apart. Several programmers had left to form their own company, Symbolics, which built and sold Lisp machines and the software that was used to run them. This was the same computer language that, up until then, the hackers at the AI Lab had been developing for free. Stallman saw this as a tremendous betrayal and fashioned himself into the last bulwark of idealism in an environment where it once flourished. The last of the true hackers performed incredible feats of programming to match—by himself—every Symbolics software update, ensuring that the free version would be just as good as the one you had to pay for. Soon thereafter, Stallman left the AI Lab to start the Free Software Foundation, and make idealism his life’s work.

Swartz’s separation from Reddit, though not as drawn out and openly adversarial as the Stallman/Symbolics fight, was similarly ideological. Though he didn’t articulate it at the time, it’s clear in hindsight that Swartz hated the feeling of doing something for the money. After the Condé Nast sale went through, he described his guilt over being paid so much for a project that he believed was so insignificant:

Once I went far outside the city to have lunch with an author I respected. He asked about what I did, wanted me to explain it in great detail. He asked how many visitors we had. I told him and he sputtered. “I’ve spent fifteen years building an audience, and you’re telling me in a year you have a million visitors?” I assented.

Puzzled, he insisted I show him the site on his own computer, but he found it was just as simple as I described. (Simpler, even.) “So it’s just a list of links?” he said. “And you don’t even write them yourselves?” I nodded. “But there’s nothing to it!” he insisted. “Why is it so popular?”

Inside the bubble, nobody asks this inconvenient question. We just mumble things like “democratic news” or “social bookmarking” and everybody just assumes it all makes sense. But looking at this guy, I realized I had no actual justification. It was just a list of links. And we didn’t even write them ourselves.

Leaving Reddit led Swartz to reassess his life. Any single project might turn out to be a slog or a bore or a gigantic pain in the ass. The solution to that problem was to sign up for “all the interesting projects that came my way.” He worked on another startup, Jottit, with his old Infogami partner Simon Carstensen. He also helped the Internet Archive’s Brewster Kahle launch Open Library, an ambitious effort to create a landing page for every book in existence.

Increasingly, the projects that interested him were at the intersection of data and governance. Swartz had grown up in a family where activism was valued, and his early work with Creative Commons gave him a cause of his own. In October 2002, the 15-year-old Swartz went to the Supreme Court as Lawrence Lessig’s guest. The law professor was serving as plaintiff’s counsel in Eldred v. Ashcroft, a key case in modern copyright law. Lessig argued that the court should overturn the Sonny Bono Copyright Term Extension Act, which extended copyright protections significantly. Though Lessig lost the case, the experience was formative for Swartz—he was an ardent copyright reformist for the rest of his life.

In the intervening years, both Lessig and Swartz became more explicitly political. In 2008, the law professor co-founded an organization called Change Congress, which urged citizens to do just that. Swartz eagerly signed on, joining the group’s board. The project sparked an interest in electoral politics. Swartz reached out to friends in Washington, polling them about how the system worked, and how it could work better. “If you talk to people in Silicon Valley, they often say that people in politics are stupid,” notes Matt Stoller, one of the people Swartz contacted. “Aaron didn’t think that way. Aaron thought people in politics are people, and they operate in a system, just like Silicon Valley is a system. And you have to learn these systems if you want to manipulate the process.”

Swartz soon realized that his talent for accessing and synthesizing information could have political value—that he was a better programmer and data gatherer than most other activists, and more ardent in his activism than most software aces. Swartz helped found a group called the Progressive Change Campaign Committee, which was devoted to electing progressive candidates to Congress. He got a Sunlight Network grant to start a site called Watchdog.net, which gathered information about voting records and campaign finance and gave its users tools to manipulate and present that data themselves.

But Swartz soon had his eyes on a larger prize: PACER, the electronic storehouse for federal court records. PACER’s contents are all public records, and anyone can access them via the Web for a small fee (now 10 cents per page). An open-government activist named Carl Malamud argued that these documents shouldn’t cost a dime, as they’re government products and thus non-copyrightable. In 2008, Malamud put out a call for like-minded folk to grab as much from PACER as possible during a trial period in which documents would be downloadable for free at select libraries.

This call for action held a natural appeal to Swartz, an avowed enemy of copyright restrictions and supporter of open data. In September 2008, he went to a library in Chicago and installed a Perl script that pulled a new document from PACER every three seconds. Before the library caught on and shut him down, he’d downloaded 19,856,160 pages, which he donated to Malamud’s open-government site Public.Resource.Org.

For Swartz, the decision to install that Perl script represented a subtle but dramatic shift in his ideology and methods. He had always believed that information wants to be free. Now, he had acted as its liberator. He’d also chosen to tweak the federal government, actively mocking a system that was resistant to change.

Though Swartz and Malamud had done nothing illegal, the PACER stunt earned them some FBI attention. Swartz later acquired his FBI file, which indicated that agents had surveilled his parents’ Highland Park home. That FBI file, Swartz said, was “truly delightful.” At the time, it all seemed funny—the feds getting so upset over something so minor. But Malamud now believes the PACER downloads contributed to the government’s subsequent fervor in prosecuting the JSTOR case. In their eyes, Swartz was a repeat offender, a data vigilante. This was no small thing.

In 2008, Swartz started to get sick of San Francisco. He had been there for about 18 months, and increasingly found the city shallow. “When I go to coffee shops or restaurants I can’t avoid people talking about load balancers or databases,” he wrote. “The conversations are boring and obsessed with technical trivia, or worse, business antics. I don’t see people reading books—even at the library, all the people are in line for the computer terminals or the DVD rack—and people at parties seem uninterested in intellectual conversation.” At the end of spring, Swartz left the city for good and moved back to Cambridge, Mass., which he described as “the only place that’s ever felt like home.”

He spent much of his time working out of a rickety Harvard Square building called Democracy Center, which housed various liberal activists. His upstairs office had a broken balcony and a hole in the wall, which meant Swartz could hear everything going on next door. His neighbor was Ben Wikler, a political organizer and former Onion contributor who was working for a global activist organization called Avaaz. The two became friends. “I knew all these people in online activism and organizing, and I read tech blogs constantly, but I didn’t know anybody in that world. And Aaron knew all the tech bloggers,” says Wikler. “So we were each other’s tickets into the other world.”

Swartz deepened his involvement in politics, attending meetings of online activists that Wikler convened above the Hong Kong Restaurant in Harvard Square. He started experimenting with weird sleep arrangements, deciding he would start waking up at 5 a.m. (The experiment didn’t last.) He became a fellow at Harvard’s Safra Center for Ethics, studying the ways in which money influenced politics, the media, and academic and industrial research. And on Sept. 24, 2010, he purchased an Acer laptop computer, brought it over to MIT, and got ready to download some documents from the academic journal database JSTOR.

Swartz’s JSTOR scheme was different from his PACER escapade in several crucial ways. First, JSTOR is not a repository of non-copyrightable government documents. Though users with subscription access to JSTOR can grab its contents for free, it is a paid service—major research institutions pony up as much as $50,000 annually for access—that houses journal articles that are mostly under copyright. Second, Swartz wasn’t spurred by an easily identifiable, information-liberating call to action along the lines of Carl Malamud’s PACER push. There was one potential precedent: A couple of years prior, Swartz had collaborated with a Stanford law student named Shireen Barday on a project that involved downloading almost 450,000 articles from the Westlaw database and analyzing them to see who, exactly, was funding legal research. While it’s possible that Swartz was going to post his JSTOR cache on the Web, it’s also plausible that he simply planned to use the articles for research along the lines of the Shireen Barday project. We can’t be sure.

Third, Swartz seems to have made an effort to conceal his connection to the JSTOR downloads—an understandable decision, given the FBI’s interest in his PACER project. This is probably why, instead of just using Harvard’s network (where he would’ve had JSTOR access), Swartz went down the road to MIT. Once there, he connected to the MIT wireless network as a guest and ran a script on his machine that allowed him to grab a huge number of articles. While authorized users can theoretically download as much as they want from JSTOR for free, its terms of service prohibit the use of programs to abet bulk downloading. Swartz must have known that his script would catch JSTOR’s attention, and engender suspicions that he wanted the articles for something other than personal use.

He was right. According to the government’s indictment against Swartz, these “rapid and massive downloads and download requests impaired computers used by JSTOR to provide articles to client research institutions.” JSTOR detected something was amiss and blocked Swartz’s IP address. He acquired a new one and began again. JSTOR then went ahead and blocked a range of MIT IP addresses. They also got in touch with MIT, which took steps to ban Swartz’s computer from its network. The indictment claims that Swartz again evaded their security and also got another computer, using both to download more JSTOR articles. This allegedly crashed some of JSTOR’s servers; in response, around Oct. 9, 2010, JSTOR blocked access to its database for everyone at MIT.

Around that time, Swartz ceased his downloading for about a month, most likely because he had traveled to Washington, D.C., with Wikler to volunteer with the DNC in the lead-up to the 2010 midterm elections. When he returned to Cambridge in November, he got back to downloading. This time, he decided to pre-empt any wireless IP bans by hard-wiring his Acer laptop directly into MIT’s network.

As is perhaps fitting, the buildings at MIT are known by numbers instead of names. Students take classes in Building 3 (mechanical engineering) and take their meals in Building W20 (the student center). Building 16 is one of the least essential structures on campus. It’s a connector, containing the Foreign Languages & Literatures Resource Center, the Division of Comparative Medicine, and, in the basement, a wiring and telephony closet that’s directly across from a pair of double doors emblazoned with a warning sign.

When Aaron Swartz first accessed Room 16-004t in late 2010, both the building and the closet door were unlocked. This wasn’t unusual. MIT is one of the country’s most open universities. Its signal building, the majestic Building 7, is always open to visitors. From there, you have unimpeded access to almost any part of the central campus via a labyrinthine network of hallways. It isn’t just students who come and go as they please. For years, local drama troupes used vacant classrooms as rehearsal space.

MIT’s open-door policy descends from the culture that Richard Stallman and the AI Lab created. Back when there were very few computers at MIT, some professors and administrators had a habit of locking the rooms that housed the precious terminals. Stallman and his fellow hackers, who believed the computers belonged to everyone who worked on them, considered a locked door a personal affront. As Steven Levy describes in Hackers, they went to great lengths to access the terminals—picking locks, crawling through ceilings, even employing brute force to open a door.

Three decades later, MIT has enthusiastically co-opted the hacker legend, selling the idea that there should be no barriers to progress and innovation. This ethic is a crucial part of the university’s culture, and legendary “hacks”—elaborate pranks that date back to the 1860s—are celebrated on MIT’s admissions site and alumni pages. The “best of” list on the site hacks.mit.edu includes the time in 1994 that students “changed the inscription on the inside of Lobby 7 from ‘Established for advancement and development of science and its application to industry arts agriculture and commerce’ to ‘Established for advancement and development of science and its application to industry arts entertainment and hacking.’ ”

But while MIT students and alums relish the school’s devil-may-care image, the university has long ceased to be “open” in the way the AI Lab’s hackers understood the term. The communal, socialistic beliefs of Stallman’s crew are anathema to MIT, which exists in large measure to perform research for government and industry groups. The university’s website boasts that MIT “ranks first in industry-financed research and development expenditures among all universities and colleges without a medical school.”

Given its reliance on government and corporate funding, MIT has an interest in appointing administrators who can speak that language. The school’s president at the time of Swartz’s JSTOR hack was Susan Hockfield, a neuroscientist by training who had shown herself willing to take a hard line—and who seemed uninterested in maintaining the university’s façade of openness. “When you’d go up to the second floor at MIT, you would pass the president’s office, and the door would be closed; you’d pass the chancellor’s office, and the door would be open,” remembers Aaron’s father Robert Swartz, who has done consulting work for the MIT Media Lab. “After [current MIT president L. Rafael] Reif took over the president’s office, the door was open. I think that was intentional symbolism.”

Multiple sources suggest that, under Hockfield, the university became much less tolerant of the kinds of incidents that, however harmless, might nonetheless damage its image. In 2007, for example, an undergraduate named Star Simpson was arrested at Logan Airport after TSA officers mistook a circuit board on her sweatshirt for a bomb. Simpson meant no harm, but the event had the potential to affect MIT’s standing with the public and with industry. MIT issued a press release decrying Simpson’s “reckless” actions, and offered her no assistance during her subsequent legal ordeal. (Hockfield later expressed regret at how the situation had been handled.)

This was MIT as of late 2010: an institution that keeps its doors unlocked, but looks askance at anyone who goes where they’re not supposed to. When MIT police learned that someone had jacked into the school’s computer system, it’s no surprise that they set up a sting operation to catch the culprit. And it wasn’t particularly surprising that the culprit turned out to be Aaron Swartz.

At 12:30 p.m. on Jan. 6, 2011, Aaron Swartz entered Room 16-004t to retrieve his laptop and external hard drive, which he had hidden beneath a box. By that point, the closet was under surveillance. Less than two hours later, according to the Cambridge Police Department arrest report, an officer located Swartz riding his bicycle. Swartz tried to get away from the cops, jumping off his bike, but he was quickly apprehended. Six months later, a federal indictment against him was unsealed, alleging that Swartz had downloaded approximately 4.8 million articles from the JSTOR database. A superseding indictment filed in 2012 would eventually ratchet up the charges, increasing the number of felony counts from four to 13.

Since childhood, Swartz had advocated for free, open access to information. This was the promise of the Semantic Web and Creative Commons. This was the ethic that drove his work with Open Library and PACER—that there was unquestioned value in making data easier to acquire and study.

In 2008, Swartz wrote something he called the Guerilla Open Access Manifesto:

Forcing academics to pay money to read the work of their colleagues? Scanning entire libraries but only allowing the folks at Google to read them? Providing scientific articles to those at elite universities in the First World, but not to children in the Global South? It’s outrageous and unacceptable.

“I agree,” many say, “but what can we do? The companies hold the copyrights, they make enormous amounts of money by charging for access, and it’s perfectly legal—there’s nothing we can do to stop them.” But there is something we can, something that’s already being done: we can fight back.

Those with access to these resources—students, librarians, scientists—you have been given a privilege. You get to feed at this banquet of knowledge while the rest of the world is locked out. But you need not—indeed, morally, you cannot—keep this privilege for yourselves. You have a duty to share it with the world.

This might not have seemed like much at the time it was written. Swartz had a habit of making provocative arguments that he may or may not have taken seriously. As a teenager, he questioned the “absurd logic” of laws that banned the distribution and possession of child pornography. In 2006, he expressed his belief that music was getting objectively better, and that Bach’s Well-Tempered Clavier may well be musically inferior to Aimee Mann’s 2005 album The Forgotten Arm.

But after his JSTOR hack, the Guerilla Open Access Manifesto became invested with meaning—the prosecutors entered the manifesto as evidence of Swartz’s intent to redistribute the downloaded articles. The government’s indictment alleges that Swartz “stole a major portion of the total archive in which JSTOR had invested” and “intended to distribute these articles through one or more file-sharing sites.” Swartz’s friends and family unanimously dispute that allegation.

The lead prosecutor was Stephen Heymann, a man who was not inclined to look kindly on Swartz’s case. In 1997, Heymann had written an article for the Harvard Journal on Legislation called “Legislating Computer Crime,” in which he argued that Congress needed to take action to address the unique challenges posed by digital-age scofflaws. “The ability of computers to perform a task millions of times in the period that it takes a human to perform the same task only once dramatically increases the harm that a particular action can cause,” Heymann wrote.

Swartz was charged under Section 1030, Title 18 of the U.S. Code, otherwise known as the Computer Fraud and Abuse Act of 1986. (He was also charged under sections 2, 981, 982, 1343, and 2461.) In that 1997 article, Heymann cited the CFAA as model legislation, calling it “a computer crime law at its purest.”

Not everyone shares Heymann’s enthusiasm for Section 1030. The law is “notoriously capacious,” Slate’s Emily Bazelon wrote earlier this year. “Prosecutors can stretch it to cover misdeeds that would otherwise barely qualify as illegal.” In 2006, for instance, a Missouri woman named Lori Drew bullied her young neighbor online; the girl, Megan Meier, ultimately committed suicide. Drew was charged by federal prosecutors under Section 1030 for violating MySpace’s terms and conditions. In an amicus brief, the Electronic Frontier Foundation argued that, though Meier’s suicide was sad and horrific, charging Drew in this manner made no sense—that this was “a dangerously overbroad construction of the CFAA,” one that “would criminalize the everyday conduct of millions of internet users.” (Drew was convicted of a misdemeanor violation of the CFAA, but the verdict was later set aside.)

Around the time of his own indictment, Aaron Swartz became interested in another law Web activists thought was out of touch with reality: the Stop Online Piracy Act. As it was being formulated in the House of Representatives, SOPA was promoted as a bill that would protect intellectual property by empowering law enforcement to shut down (and possibly arrest the proprietors of) websites that streamed or hosted copyrighted material without authorization. Big media organizations had been lobbying Congress to address copyright infringement for years. SOPA was a direct descendant of the Combating Online Infringement and Counterfeits Act, which was making its way through the Senate in 2010 before Sen. Ron Wyden killed the bill in committee. That bill was rewritten and resubmitted to the Senate in 2011 under a different name: the PROTECT IP Act, or PIPA—the sister legislation to SOPA.

When it was first introduced in October 2011, SOPA had the backing of media conglomerates and the U.S. Chamber of Commerce. But online activists quickly seized on the legislation as a dull-witted attack on the Internet at large, a broadside against online culture that would stifle creativity, favor big business at the expense of independent operators, and effectively kill the free, open, non-commercial Internet.

The protests against SOPA and PIPA cried out for Swartz’s involvement. This was the culmination of everything he had worked on and believed in: copyright reform, collaborative culture, open access to data, political activism. Despite the case against him—or perhaps because of it—Swartz decided to go on the attack.

Before his arrest, Swartz had gone to Providence, R.I., to volunteer on the congressional campaign of a young city councilman named David Segal. They lost the election but forged a friendship. Now, Segal and Swartz joined forces to create Demand Progress, an organization designed to “win progressive policy changes for ordinary people through organizing, and grassroots lobbying.” Swartz made speeches, brought other organizations into the fight, and built tools that made it easy for citizens to contact lawmakers and register their opposition to SOPA and PIPA.

Facing tremendous external pressure, Congress backed down in January 2012. SOPA and PIPA were dead.

Several people close to the SOPA protests believe that, without Swartz’s involvement, the bills might have passed. Holmes Wilson, whose organization Fight for the Future was instrumental in orchestrating a blackout of Wikipedia and other prominent websites, credits Swartz and his organization with spreading the anti-SOPA message to a larger audience. “Demand Progress was the first organization to build campaigns that connected those bills to entire new audiences of people who cared about tech policy just because of how much they lived on the Internet, and not because of any previous kind of commitment to the ideals of liberty online,” Wilson says.

A few months after the bills had been defeated, Swartz spoke at a conference in Washington, D.C., about the lessons of the SOPA fight.

Bills like SOPA and PIPA would return, he said:

Sure, it will have yet another name, and maybe a different excuse, and probably do its damage in a different way. But make no mistake: The enemies of the freedom to connect have not disappeared. The fire in those politicians’ eyes hasn’t been put out. There are a lot of people, a lot of powerful people, who want to clamp down on the Internet. And to be honest, there aren’t a whole lot who have a vested interest in protecting it from all of that. Even some of the biggest companies, some of the biggest Internet companies, to put it frankly, would benefit from a world in which their little competitors could get censored. We can’t let that happen.

The victory against SOPA and PIPA was real, but the end of the campaign brought Swartz back to reality. His friends had been subpoenaed. And although JSTOR had declined to prosecute, the government—with MIT’s tacit backing—continued to pursue charges against Swartz. “Stealing is stealing,” U.S. Attorney Carmen Ortiz said in a statement in July 2011, “whether you use a computer command or a crowbar, and whether you take documents, data or dollars.”

Though Swartz loved Cambridge, Mass., he felt he had to get away. In mid-2011, he started spending more time in New York City; around the same time, he grew closer to his friend Taren Stinebrickner-Kauffman. A political activist who had worked at Avaaz with Ben Wikler, Stinebrickner-Kauffman had met Swartz the previous year, in D.C. They shared similar interests and vaguely similar backgrounds. (“We would joke about how the two of us would really confuse the Census,” she says. “We could be reasonably recorded as two unmarried high school dropouts living together in a one-room studio.”) Gradually, a romance bloomed in rocky terrain. “The month I started dating him he quit two jobs, broke up with his ex-girlfriend, moved from Cambridge to New York, and was indicted,” says Stinebrickner-Kauffman. “It was kind of a bad time in his life.”

They moved to New York City, renting a studio in Crown Heights, Brooklyn. With the SOPA fight over, the JSTOR case consumed Swartz’s time and energy. “When he first came to New York, he was taking buses back to Boston every Monday to show up at court at 9 a.m., and show them that he hadn’t fled,” Wikler remembers. Swartz only really discussed the case with his father and his lawyers. He worried about dragging his friends into it—he didn’t want anyone else to be subpoenaed. The stress wore on him, and on Stinebrickner-Kauffman. “He had this view that he shouldn’t rely on anyone else,” she says. “That strength meant standing alone.”

The idea of being the last honest man taking a stand against a corrupt world is an appealing one, especially to an idealist like Swartz. It meant not letting his adversaries see they were getting to him. “The semblance of normality was really important to him,” says Stinebrickner-Kauffman. As such, he continued his lifelong habit of taking on new projects, becoming a contributing editor at the Baffler, the newly revived, pro-labor journal. “Aaron was the least pretentious person I’ve ever met,” says John Summers, the Baffler’s editor. “He was willing to have hourlong conversations to help a printed magazine get back on its feet.”

But Swartz no longer had the luxury to dabble. He was using his own money to fight the charges, and he needed a cash infusion. Lawrence Lessig’s wife Bettina Neuefeind set up a legal defense fund. Swartz himself, under great duress, started contacting wealthy acquaintances to ask for help. Jeff Mayersohn, owner of the independent Harvard Book Store, recalls getting an email from his friend in August 2012. Mayersohn agreed to contribute, and suggested holding meetings and fundraisers to raise more money. Swartz demurred. “It was hard enough for me to make this simple ask of you,” Mayersohn remembers him replying.

It was becoming clear, at least, that the government’s case had some weak points. The superseding indictment, filed on Sept. 12, 2012, claimed that Swartz had “contrived to break into a restricted-access wiring closet at MIT.” But the closet door had been unlocked—and remained unlocked even after the university and authorities were aware that someone had been in there trying to access the school’s network.

The indictment went on to charge that Swartz had “contrived to … access MIT’s network without authorization from a switch within that closet” and “access JSTOR’s archive of digitized journal articles through MIT’s computer network.” But Alex Stamos, an independent expert retained by Swartz’s defense team, had prepared a strong counter-argument. Stamos argued that Swartz’s access to MIT’s network had in fact been authorized, by virtue of MIT’s lax attitude toward network security. In a blog post published after Swartz’s death, Stamos explained that MIT’s computer network was extraordinarily easy to access—“in my 12 years of professional security work I have never seen a network this open”—and that it was maintained that way on purpose in “the spirit of the MIT ethos.” The JSTOR site, he continued, “lacked even the most basic controls to prevent what they might consider abusive behavior, such as CAPTCHAs triggered on multiple downloads.”

Swartz, the indictment continued, had also “contrived to … use this access to download a substantial portion of JSTOR’s total archive onto his computers and computer hard drives,” and “avoid MIT’s and JSTOR’s efforts to prevent this massive copying, efforts that were directed at users generally and at Swartz’s illicit conduct specifically.” But these charges, too, were disputable. Stamos argued that prosecutors had also “provided no evidence that these downloads caused a negative effect on JSTOR or MIT, except due to silly overreactions such as turning off all of MIT’s JSTOR access due to downloads from a pretty easily identified user agent.”

“We had a very good expert,” says Elliot Peters, Swartz’s attorney, “and he had helped us understand how the MIT network worked, and how access to JSTOR was accomplished. And it seemed to me that it wasn’t accomplished in a way which involved any unauthorized computer access.”

Swartz hired Peters, a partner at the San Francisco firm Keker & Van Nest, in October 2012. Peters, who has also represented Lance Armstrong, was Swartz’s third lead attorney. As the weeks went by, he became only more optimistic about Swartz’s chances, and about the prospect of knocking out much of the evidence that the prosecutors were planning to use at trial. “We made a bunch of suppression motions, including a motion to suppress the search of Aaron’s laptop and USB drive, because they took 34 days after they seized it to get a search warrant,” says Peters. On Dec. 14, Peters and prosecutor Stephen Heymann appeared before Judge Nathaniel M. Gorton, who set an evidentiary hearing for Jan. 25, 2013. A few days later, prosecutors released 170 megabytes of new evidence that made Swartz’s defense team even more hopeful. Included in this trove were communications between Heymann and law enforcement, extending to the time before Swartz’s arrest. Peters was confident that this new information strengthened his position.

On Jan. 9, 2013, Peters called Heymann to discuss the upcoming evidentiary hearing. “Toward the end of it,” Peters recalls, “I said ‘Can’t we find some way to make this case go away?’ I remember saying to them, ‘It’s just not right for this case to ruin Aaron’s life.’ ” The prosecutor responded with a familiar refrain: the government would never agree to a deal that didn’t include jail time, and if Swartz was convicted at trial, they would seek a guidelines sentence in the range of seven years. As usual, the defense and the prosecution could reach no common ground. The case—scheduled to go to trial on April 1, barring further delays—would continue.

On Thursday, Jan. 10, the day after this latest failure to secure a plea deal, Stinebrickner-Kauffman was coming back to New York City from a retreat upstate. Swartz texted her, asking when she was going to be home but not explaining why. When she arrived, he jumped out from behind the door and yelled, “Surprise!”

Though Stinebrickner-Kauffman was feeling tired, Swartz was in high spirits, and insisted that they go meet some friends at a Lower East Side bar called Spitzer’s Corner. Swartz treated himself to two of his favorite foods: macaroni and cheese and a grilled cheese sandwich. The mac and cheese was mediocre, but Swartz and Stinebrickner-Kauffman agreed that the grilled cheese sandwich was among the best they had ever eaten.

On the morning of Jan. 11, one week after he’d insisted it would be a great year, Swartz woke up despondent—lower than Stinebrickner-Kauffman had ever seen him. “I tried everything to get him up,” she says. “I turned on music, I opened the windows, I tickled him.” Eventually he got up and got dressed, and Stinebrickner-Kauffman thought he was going to come with her to her office. But instead, Swartz said he was going to stay home and rest. He needed to be alone. “And I asked him why he had gotten dressed,” says Stinebrickner-Kauffman. “But he didn’t answer.”

That afternoon, Peters started reading through some of the evidence that the prosecution had handed over in late December. As he read, he became more and more excited about Swartz’s chances at the Jan. 25 suppression hearing. “If we had won that motion and suppressed the fruits of their search, they wouldn’t have had a lot of the evidence they had planned to use at trial,” he says. “I ran down the hall, saying ‘Look at this! Look at all this!’ ”

Peters put the new evidence in his briefcase and got in his car. As he drove, he got a call from Bob Swartz. Aaron had committed suicide.

It’s been almost a month since Swartz hanged himself, and the initial shock has hardened into something sharper. “I’ve become so much angrier since he died,” says Wikler.

That sentiment is a common one. Swartz’s family, friends, and supporters are almost unanimous in saying that his suicide took them entirely by surprise. They do not believe Swartz was clinically depressed—he was moody and occasionally melodramatic, maybe, but not depressed. “I’ve researched clinical depression and associated disorders. I’ve read their symptoms, and at least until the last 24 hours of his life, Aaron didn’t fit them,” Stinebrickner-Kauffman wrote in a Feb. 4 blog post. “Depression is often like a bottle of ink in the bottom of your fish tank, and just everything is going to get colored black and impenetrable, no matter what goes in there,” says Wikler. “And Aaron wasn’t like that. Aaron was almost completely transparent.”

Those closest to Swartz also agree on who’s to blame for his death. “The government and MIT killed my son,” said Robert Swartz at Aaron’s funeral. Lawrence Lessig—who wrote in 2011 that Aaron’s alleged actions, if true, were “ethically wrong”—argued that he’d been “driven to the edge by what a decent society would only call bullying.”