So many interesting directions here! Before we sign off, let me offer a few comments on Nick’s thoughts. First, while I like the Capt. Kirk model as well as anyone, I have to think there’s got to be a point when our attachment to our physical selves simply becomes too inefficient. As biological organisms, or “wetware,” we’re just bad fits with certain environments, such as combat or space. As you know, we’ve been working on computer-brain interfaces, in the form of chips implanted in brains, that enable smooth coupling between an individual and their (properly instrumented) environment. Experiments that began here at Arizona State University and have been continued at Duke and elsewhere have involved monkeys learning to move wirelessly connected mechanical arms as if the arms were part of their own bodies, and using them effectively even when the arms (but not the monkeys) are shifted up to MIT and elsewhere. More recently, monkeys with chips implanted in their brains at Duke University have kept a robot in Japan running through a wireless connection to their chips. Similar technologies are also being explored to enable paraplegics and other injured people to interact with their environments and to communicate effectively. The upshot is that “the body” is becoming more than just a spatial presence; rather, it becomes a designed, extended cognitive network. It is conceptually a simple step to build body extensions that are wired directly into our brains but that, being hardened mechanical systems rather than wetware, can go easily and far more efficiently where no body part has gone before: space.

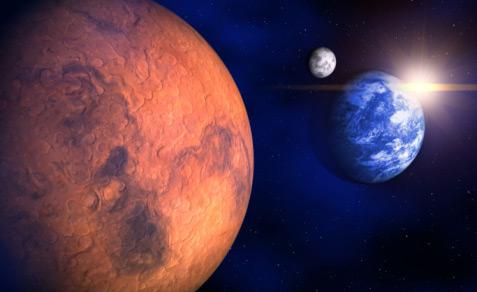

This is a bemusing prospect because it raises a number of interesting questions. How does it affect our brain, for example, if the lag between sensory perception and the brain’s reception of the information is not a fraction of a second, as with our current bodies, but anywhere from three to 20-plus minutes, because our “body” is in fact on Mars? But perhaps more fundamentally, what happens to our definition of “the human” when it begins to include remote functionality that may or may not be biological, but might increasingly be mixed (as in the existing robot with guidance provided by rat brain tissue, rather than silicon)? Is humanity on Mars when my robot extension is? What about when cognition is mixed, partially onboard the Martian Brad, and partially in my wetware brain here in Phoenix? And, something that perhaps offends our sense of specialness, what happens when it becomes clear that our robotic selves, and not our biological Cartesian selves, are the real inheritors of Space, the Final Frontier?

I also appreciate your question about Heidegger (who was, for those of you unfortunate enough to have escaped philosophy in university, deeply involved in National Socialism, although the extent to which he was active as opposed to a fellow traveler is somewhat unclear). With brilliant but seriously flawed individuals, there is always the problem of whether one should appropriate the thinking and the contributions and ignore the imperfections in the vessel from which they come. In general, I favor the appropriation model: Let people at least contribute some good if they can. Wonderful discussion. I am going off to practice remote time-lagged cognition.

Brad