It’s no longer true that “seeing is believing.” A team of psychologists from the University of Warwick, led by Ph.D. student Sophie Nightingale, has recently published research in which respondents misidentified 40 percent of doctored images as real. The experiment showed five fake images and five real images to 707 participants, asking the respondents to classify each picture as real or fake. These images were distorted in ways that ranged from obvious to subtle. Some contained impossible shadows that implied two suns, for instance, while others had been lightly airbrushed.

Airbrushing was the most difficult change for people to detect—just 40 percent of respondents could distinguish airbrushed photos from nonairbrushed photos. When elements of a photo were added or removed, like in the puzzles section of old newspapers, close to 55 percent of respondents could tell. Changes that the authors considered “implausible”—manipulating the shadows in an image or changing the geometry of bridges and trees—tipped off roughly 60 percent of respondents. However, when all distortions were applied simultaneously, more than 80 percent of respondents were able to tell the image was “fake.”

These results could actually understate the magnitude of the problem. These tests were conducted in a lab, and participants were told some of the images were faked. Even when warned that some images were lies, respondents still mistook 40 percent of the doctored images for real ones.

In the real world, the task is harder and the consequences are serious. On Nov. 15, 2015, Veerender Jubbal, a Canadian freelance journalist, appeared on the front page of the Spanish newspaper La Razón wearing a vest of explosives and holding a blue-and-gold Quran. The newspaper accused him of taking part in the Paris bombings. But the picture was a fake, a poorly done Photoshop job that hoodwinked Twitter and the editors at a major newspaper. In reality, Jubbal was holding an iPad, not a Quran, and was wearing a button-down shirt, not a bomb jacket. A retraction was published, but Jubbal had already been slandered by the same internet gremlins that perpetuated Gamergate. For the next couple of weeks, his Twitter feed was filled with death threats.

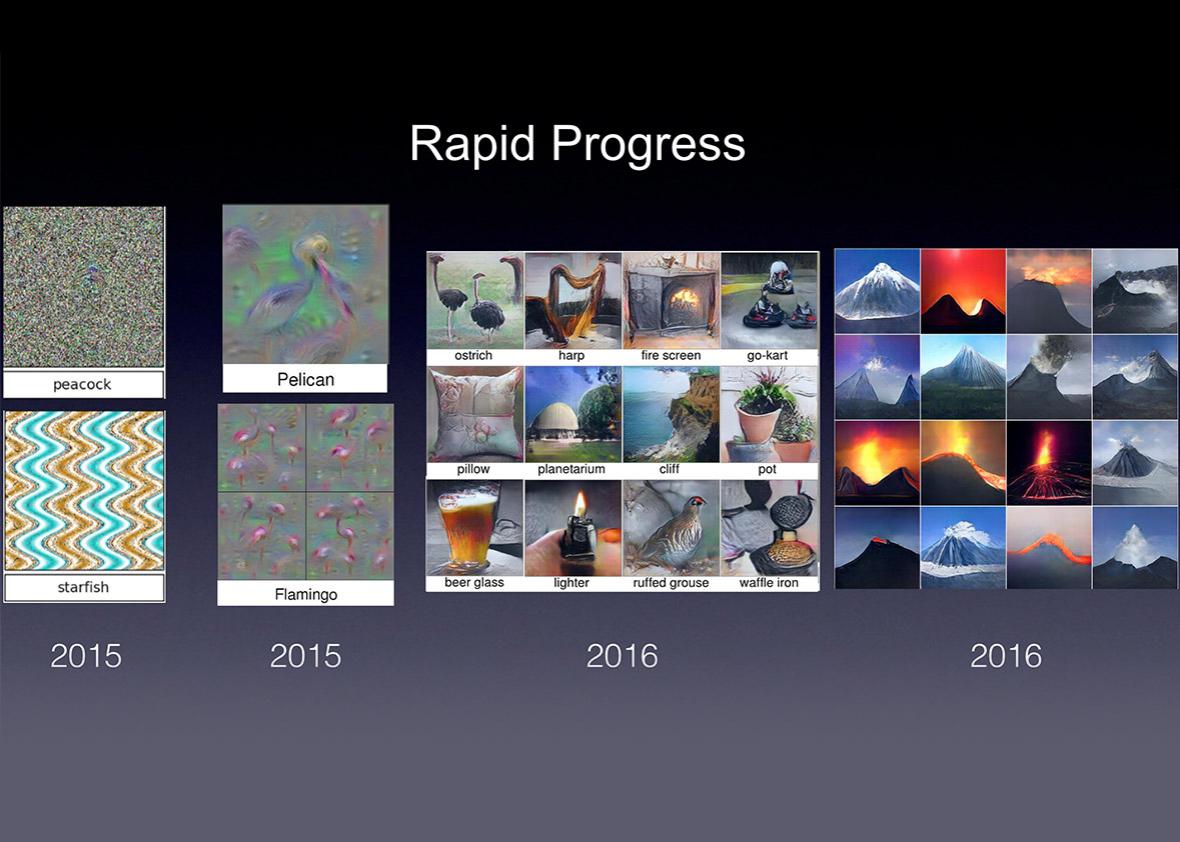

If people struggle with clear forgeries like reskinned iPads, then we are in trouble. Images are increasingly doctored by powerful algorithms like neural nets to look realistic, and these algorithms can do more than merely edit. New uses of neural networks include generating modern art that 75 percent of human subjects attribute to a fellow flesh-and-blood human. In that study, a team of researchers from Rutgers University and the College of Charleston created an A.I. intended to generate novel works of art from a given genre. To test the A.I., the researchers showed human subjects a series of images that mixed real abstract expressionist art from 1945 to the present with computer-generated images. While 75 percent is an extremely high number, the A.I. isn’t a polymath painter. The authors chose abstract expressionist art because it rarely contains clear figures or interpretable subject matter, two things they admit computers cannot yet generate.

More recently, a team of researchers from the University of Washington grafted audio of a Barack Obama speech onto a completely unrelated video of him speaking. It’s not perfect. Occasionally the audio and video are out of sync, like old dubbed kung fu movies, but it’s still a huge step forward. This technology will only improve. “It’s an arms race between the forgers and the detector,” Nightingale told me. “We need to make it hard for them. We need to find better ways to identify manipulated images.”

Jeff Clune, professor of computer science at the University of Wyoming, told me that there’s a silver lining to today’s cutting-edge methods to generate computer images: This research also automatically creates ways to detect faked images.

Image credit: Anh Nguyen and Jeff Clune/University of Wyoming; Jason Yosinski/Uber AI Labs

The best models around are based on generative adversarial networks. Clune says that a GAN is composed of two neural networks playing a game of cops and robbers, or cops and forgers, rather. These neural networks are commonly referred to as “deep neural networks”: They take data and combine them through a series of many transformations. For instance, a neural network is often given images of tumors and then asked to predict whether they are cancerous. The high number of transformations is what makes a neural network “deep.” In a GAN, one network, the forger, constructs a fake image, say a painting of a volcano. The other network, the cop, is tasked with judging the veracity of the forgery. If the fake passes muster, the forger becomes more confident in its strategy. However, if the cop realizes that it’s a fake, it tells the forger what it did wrong. (This is not, by the way, a great law enforcement strategy.) By fixing these mistakes, the forger creates more realistic facsimiles to fool the cop.

But this model goes both ways. Just as the cop improves the forger’s skills, the forger improves the cop’s detection. GANs, then, naturally create classifiers that can be used to tell the difference between real and fake. Clune believes that—once some technical issues have been solved—GANs could detect fake images (and, eventually, video and audio).
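For readers who want to see the cop-and-forger game in motion, here is a deliberately tiny sketch in Python. It is not the model Clune or anyone else actually uses: Real GANs pit deep networks against each other over images, while this toy assumes the “real” data are just numbers clustered around 4, gives the forger a single adjustable parameter (`theta`), and makes the cop a one-line logistic classifier. The function name `train_toy_gan` and every setting in it are invented for illustration.

```python
import math
import random


def sigmoid(t: float) -> float:
    """Numerically stable logistic function, used as the cop's confidence."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 2000, lr: float = 0.01, seed: int = 0):
    """Toy one-dimensional GAN (illustrative assumptions throughout)."""
    rng = random.Random(seed)
    theta = 0.0      # forger: a fake is theta plus random noise
    w, c = 0.0, 0.0  # cop: P(real | x) = sigmoid(w * x + c)

    for _ in range(steps):
        real = rng.gauss(4.0, 1.0)          # assumed "real" data: N(4, 1)
        fake = theta + rng.gauss(0.0, 1.0)  # the forger's attempt

        # Cop's turn: nudge w and c to score the real sample higher
        # and the fake lower (ascend log D(real) + log(1 - D(fake))).
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        w += lr * ((1.0 - d_real) * real - d_fake * fake)
        c += lr * ((1.0 - d_real) - d_fake)

        # Forger's turn: the cop "tells the forger what it did wrong"
        # through the gradient of log D(fake) with respect to theta.
        d_fake = sigmoid(w * fake + c)
        theta += lr * (1.0 - d_fake) * w

    return theta, w, c


theta, w, c = train_toy_gan()
print(f"forger's center: {theta:.2f}")
print(f"cop's confidence that a typical fake is real: {sigmoid(w * theta + c):.2f}")
```

The two halves of the loop are the two halves of the argument: The forger only improves because the cop hands back feedback, and the cop that comes out the other end is itself a trained fake detector. Toy games like this one can oscillate rather than settle, which is one flavor of the technical issues that still need solving.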

However, in the long term, he has an extremely pessimistic view of the arms race Nightingale mentioned. “The end state is that the counterfeiter keeps getting better, and the cop keeps getting better, and eventually the counterfeiter is generating things that are indistinguishable from real,” he said. At that point, the neural net with the cop’s badge can only throw up its hands and say game over. Clune believes that at some point, A.I.-generated speech and video will be indistinguishable from the real thing.

On the (semi)bright side, he does expect an intervening period in which it is not impossible to distinguish the real from the fake, just extremely difficult. Researchers should use this time to develop secure pipelines that carry real images and video from the point at which they are captured to their delivery.

For example, every iPhone could come with a trusted chip containing a secret key, kept private from everyone in the world but Apple. When that iPhone takes a picture, it combines the image and the key to create an unforgeable signature, which it gives, along with the photo, to the photographer. The only way for the photographer to reproduce the signature is to take the exact same image. However, Apple, knowing the secret key, could reproduce the signature on command if it had the image. When photographers submitted images to a publisher (like, say, Slate) they would also include the images’ signatures. Slate could then give Apple the image along with the signature provided. If the signature Apple reproduced were different from the one submitted, the photographer would be caught red-handed.
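The scheme described above closely resembles a standard cryptographic tool called a keyed hash, or HMAC. As a rough sketch of the idea, and not a description of anything Apple actually ships, the Python below uses the standard library’s `hmac` module. The names `DEVICE_KEY`, `sign_image`, and `verify_image` are hypothetical, and the byte strings stand in for real image files.

```python
import hashlib
import hmac
import secrets

# Hypothetical secret key: in the scenario above it would live inside the
# phone's trusted chip and be known only to the manufacturer.
DEVICE_KEY = secrets.token_bytes(32)


def sign_image(image_bytes: bytes, key: bytes) -> str:
    """Combine the image and the secret key into a signature (HMAC-SHA256)."""
    return hmac.new(key, image_bytes, hashlib.sha256).hexdigest()


def verify_image(image_bytes: bytes, signature: str, key: bytes) -> bool:
    """Recompute the signature from the image and compare it to the one submitted."""
    expected = sign_image(image_bytes, key)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, signature)


# The camera signs the photo at the moment of capture.
photo = b"stand-in bytes for the original photo"
signature = sign_image(photo, DEVICE_KEY)

# Later, a publisher asks the key holder to check what it was sent.
doctored = b"stand-in bytes for a doctored photo"
print(verify_image(photo, signature, DEVICE_KEY))     # the untouched photo
print(verify_image(doctored, signature, DEVICE_KEY))  # the edited one
```

Because the key is shared between the chip and the manufacturer, only the manufacturer can check a signature, which matches the arrangement described here. A design based on public-key signatures would let anyone verify a photo without knowing the secret, but that is a different scheme from the one sketched in this article.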

This process isn’t so different from what security researchers do now. For instance, trusted platform modules are hardware components that generate cryptographic keys in order to guarantee the software on a computer hasn’t been changed by a virus or malware. One day, cameras could imbue their images with secret codes to guarantee their authenticity.

In a coming world where computers can generate perfect video of any nefarious scenario, to avoid all news becoming fake news, we will have to verify before we trust.

This article is part of Future Tense, a collaboration among Arizona State University, New America, and Slate. Future Tense explores the ways emerging technologies affect society, policy, and culture.